This is Part 4 of the "So You Want to Add AI Into Your App" series. Part 1 covered whether you're a consumer, integrator, or trainer. Part 2 covered architecture decisions you can't reverse. Part 3 covered which security controls work and which ones don't. If you've built tenant isolation, implemented intent-based access control, and set up audit logging from those earlier parts, you already have most of what compliance frameworks ask for. This article covers how to document that work in terms your auditor and enterprise buyers recognize.

A Slack message nobody is ready for

"Our auditor/prospect/customer is asking about AI controls and we don't have answers."

Every company gets this request at some point. The wording and the timing vary but the anxiety is the same.

Or it's a prospect's security questionnaire with a section on ISO 42001, a standard nobody on the team has heard of. Or sales forwarding a message because the buyer wants your position on the EU AI Act or their state's AI Security Framework before they move forward.

The instinct is to treat this as a new compliance project. Hire a consultant. Build a fresh program. Spend months writing documentation for something that's been running in production for a year.

But that's expensive. We've also seen companies that had already built their product around tenant isolation, deterministic access control, audit logging, and other security controls, then spent too much time building a compliance program that describes those same controls in language an auditor recognizes. The architecture was there and they paid someone to rediscover it.

Compliance for AI integrators is a translation problem in these early days. If you followed the architecture guidance from earlier in this series, you have most of the controls. What you might be missing is thorough documentation and evidence mapping. You've likely built what you need; now you need to describe it.

Compliance Frameworks, Ranked by Whether They'll Affect You

Most compliance guidance treats every AI framework as equally important. But they aren't. Some are mandatory, some are optional differentiators, some sound important but won't affect your business for years, if ever. Here's what each framework requires of an integrator (not a model builder or research lab) and what it's worth to you in practice.

SOC 2

No AI-specific trust services criteria exist - the AICPA hasn't published any (yet). There is no section labeled "AI controls" in the SOC 2 framework. Your auditor is applying existing criteria that were written before LLMs were in production use.

The ones that tend to get pulled in for AI features: logical access (CC6.1, CC6.3, CC6.7) for how data reaches the model and who can invoke it, monitoring and incident handling (CC7.2, CC7.3, CC7.4) for the AI audit trail, change management (CC8.1) for model and prompt changes, vendor management (CC9.2) for the model provider, and — for reports that carry the Confidentiality TSC — C1.1 and C1.2 for identifying, protecting, and disposing of confidential data that passes through the pipeline. Processing Integrity (PI1.1) could also apply if the report carries it, but most SaaS integrator reports don't.

What auditors ask in practice: How do AI features handle customer data? What controls exist around the AI pipeline? Can you demonstrate monitoring of AI outputs? Do you have an inventory of your model and AI components?

These are reasonable questions, and if you've built your AI pipeline with proper tenant isolation, you can answer them. The auditor is checking whether your existing controls extend to AI systems, not whether you've built a separate AI compliance program.

Most enterprise risk functions require their vendors and partners to have SOC 2. Adding AI coverage to your existing audit is incremental work. Check out more at https://startupsoc2.fyi/

ISO 42001

ISO 42001 covers governance, risk assessment, and lifecycle management for AI systems. Written broadly enough to cover foundation model companies and AI-enabled consumer products.

For integrators, most of the training data and model development requirements don't apply since you're calling an API, not building a model. What does apply: AI governance structure, risk assessment for your use cases, data management for what you feed models, and lifecycle documentation for AI features.

Adoption is still early. When Miro got certified, it was industry news because few companies have done it. Auditor expertise is still thin, which means your assessor may be learning the standard alongside you. But the upside is that certification puts you ahead of nearly all competitors in enterprise sales. The downside: without careful scope definition, the standard is broad enough to consume considerable time and effort on requirements that don't apply to integrators.

NIST AI RMF

Published by NIST, the AI Risk Management Framework has four functions: Govern, Map, Measure, Manage. It's free and not a certification - you can't pass it. But you can align to it and document that alignment.

This framework is a useful starting point for integrators, not only on its own merits but because Govern/Map/Measure/Manage maps cleanly to both ISO 42001 and SOC 2. If you organize your evidence around these four functions, you're building a structure that serves multiple frameworks at once. That makes it a good entry point for companies that need to support several compliance requirements without building separate programs for each.

It's also the framework most referenced in pending US legislation, which makes it the one government-adjacent buyers recognize.

AIUC-1

Commercial framework with commercial interests, but the first compliance standard written for autonomous AI agents. It includes requirements across privacy, security, safety, and reliability. Quarterly adversarial testing is required.

If you have agentic features, the questions AIUC-1 asks are the questions your buyer's security team will ask about agents within the next year. Having answers demonstrates a level of understanding that generic ISO 42001 alignment can't match. If you built intent-based access control and agent audit logging from Part 3, you have the controls. AIUC-1 gives you the language to present them. Check out the (unaffiliated) navigator at https://adversis.github.io/aiuc1-navigator/.

EU AI Act

High-risk system enforcement starts August 2026. Penalties up to 35 million EUR or 7% of global revenue.

Most SaaS integrations that use AI for summarization, search, or content generation probably fall outside the high-risk definition. But if your AI features influence hiring, credit scoring, healthcare decisions, education assessment, emotional evaluation, or critical infrastructure, you're likely in scope. The line isn't always clean. A high-level summary can be found here.

If you serve EU customers, figure this out soon. The documentation requirements for high-risk systems can take some time to build.

CIS Controls

No AI-specific controls yet. But existing controls map nicely: Controls 3, 4, 6, and 16 (data protection, secure configuration, access management, application security) apply to AI pipelines. If you already map to CIS, extending coverage is a documentation exercise. It's a useful, tactical framework in general, but for AI it's not where most integrators need to spend their time. See the companion guide here.

Colorado AI Act

This applies to any company with Colorado customers, not just Colorado-based companies. If your AI features influence employment, lending, insurance, housing, education, or criminal justice decisions, you're in scope regardless of where you're headquartered.

Requirements include impact assessments, disclosure obligations, and risk management documentation. If your AI touches high-risk decision categories and you have US customers, keep tabs on this one. It is still undergoing adjustments as of this writing in early 2026.

You already have most of the evidence

If you built the architecture from Parts 2 and 3, here is the backward mapping from your controls to framework requirements.

Tenant isolation from Part 2 (your per-tenant vector namespaces and pre-retrieval access gating) maps directly to ISO 42001 data governance (Annex A.7), SOC 2 logical access and transmission criteria (CC6.1, CC6.3, CC6.7) - and C1.1 if the report carries Confidentiality - and EU AI Act data governance. When the auditor asks how you prevent cross-tenant data leakage in AI responses, your architecture is your answer. You'll also need a data flow diagram showing how tenant boundaries work at each stage of the pipeline, plus evidence that you test those boundaries.

Intent-based access control from Part 3, the IBAC policy engine, permission boundaries, and decision logging, maps to AIUC-1 permission requirements, ISO 42001 access governance, and SOC 2 logical access (CC6.1, CC6.3). What you need is policy documentation, examples of boundaries in action, and authorization decision logs.

Audit logging from Part 3, your structured audit trail, maps to SOC 2 monitoring and incident-handling criteria (CC7.2, CC7.3, CC7.4), EU AI Act transparency, and NIST AI RMF Measure. Every logged interaction, decision trace, and input/output pair is good evidence. What you need is retention policies, log integrity controls, and the ability to produce an audit trail for any specific interaction.
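One way to make "log integrity controls" and "produce an audit trail for any specific interaction" concrete is a hash-chained log. The sketch below is an illustration under assumptions, not the logging design from Part 3: the record fields (`interaction_id`, `tenant`, `event`) and the in-memory list are hypothetical stand-ins for whatever your audit pipeline actually stores.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, record: dict) -> str:
    """Hash the previous entry's hash together with this record's canonical JSON."""
    payload = prev_hash + json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, chaining it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"record": record, "prev": prev_hash, "hash": entry_hash(prev_hash, record)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; an edited, reordered, or mid-chain-deleted entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


def trail_for(log: list, interaction_id: str) -> list:
    """Return every record for one interaction, in logged order."""
    return [e["record"] for e in log if e["record"].get("interaction_id") == interaction_id]


# Hypothetical records for illustration only.
audit_log: list = []
append_entry(audit_log, {"interaction_id": "int-001", "tenant": "acme", "event": "prompt_received"})
append_entry(audit_log, {"interaction_id": "int-001", "tenant": "acme", "event": "access_decision", "allowed": True})
append_entry(audit_log, {"interaction_id": "int-002", "tenant": "globex", "event": "prompt_received"})

print(verify_chain(audit_log))
print(trail_for(audit_log, "int-001"))
```

A chain like this does not detect entries trimmed from the end of the log. Catching that requires storing the latest hash somewhere the log writer can't modify, which is a design decision outside this sketch.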

Data residency from Part 2 maps to GDPR data processing and EU AI Act data governance. What you need is documentation of where data is processed and stored (logically and physically) and which providers receive what.

Rate limiting from Part 3 maps to NIST AI RMF Manage. What you need is documented limits, alerting thresholds, and response procedures.
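The backward mapping above can be kept as data rather than prose, so that one source answers both "what does this control satisfy?" and "what do we have for this criterion?". The sketch below transcribes the mappings from the preceding paragraphs. The dictionary layout and the control key names are assumptions made for illustration.

```python
from collections import defaultdict

# Transcribed from the paragraphs above: control -> framework -> references.
CONTROL_MAP = {
    "tenant_isolation": {
        "ISO 42001": ["Annex A.7 data governance"],
        "SOC 2": ["CC6.1", "CC6.3", "CC6.7", "C1.1 (if Confidentiality is in scope)"],
        "EU AI Act": ["data governance"],
    },
    "intent_based_access_control": {
        "AIUC-1": ["permission requirements"],
        "ISO 42001": ["access governance"],
        "SOC 2": ["CC6.1", "CC6.3"],
    },
    "audit_logging": {
        "SOC 2": ["CC7.2", "CC7.3", "CC7.4"],
        "EU AI Act": ["transparency"],
        "NIST AI RMF": ["Measure"],
    },
    "data_residency": {
        "GDPR": ["data processing"],
        "EU AI Act": ["data governance"],
    },
    "rate_limiting": {
        "NIST AI RMF": ["Manage"],
    },
}


def by_framework(control_map):
    """Invert control -> framework into framework -> reference -> [controls]."""
    view = defaultdict(lambda: defaultdict(list))
    for control, frameworks in control_map.items():
        for framework, references in frameworks.items():
            for reference in references:
                view[framework][reference].append(control)
    return view


view = by_framework(CONTROL_MAP)
for reference, controls in sorted(view["SOC 2"].items()):
    print(f"{reference}: {', '.join(controls)}")
```

The inverted view is what an auditor's request list looks like: one criterion at a time, with the controls that back it.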

Companies that built reasonable security architecture have a majority of compliance evidence already being generated by production systems. The remaining portion is where your real work sits.

Four gaps you should close

These are the things the architecture patterns above don't produce on their own, and no backward mapping covers them.

Model inventory

Right now, can you tell me which models are in production? What version each is running? What data each one can access? Who approved each deployment? When each was last evaluated?

Many teams can't, and don't have it documented in one place. The AI feature shipped, the demo worked, the team moved on. Nobody maintained a registry of what's running, and to find out you need to dig through code. This is a common gap, and virtually every framework above requires an inventory. ISO 42001 requires it explicitly. SOC 2 auditors ask for it. NIST AI RMF depends on it. CIS controls start with inventory.

The fix is simple, though. A spreadsheet with columns for model name, provider, version, deployment date, data access scope, owner, and last review date. Update it when models change.
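If you'd rather keep the inventory next to the code than in a spreadsheet, the same columns fit in a small record type. This is a minimal sketch: the field names follow the columns listed above, while the example entries, the provider name, and the 90-day review cadence are assumptions for illustration, not requirements from any framework.

```python
import csv
import io
from dataclasses import dataclass, asdict, fields
from datetime import date


@dataclass
class ModelRecord:
    model_name: str
    provider: str
    version: str
    deployment_date: date
    data_access_scope: str
    owner: str
    last_review_date: date


def overdue_for_review(records, today, max_age_days=90):
    """Return records whose last review is older than max_age_days (assumed cadence)."""
    return [r for r in records if (today - r.last_review_date).days > max_age_days]


def to_csv(records):
    """Render the inventory as CSV text so it can live in a spreadsheet or a repo."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ModelRecord)])
    writer.writeheader()
    for record in records:
        writer.writerow(asdict(record))
    return buffer.getvalue()


# Hypothetical entries for illustration only.
inventory = [
    ModelRecord("support-summarizer", "ExampleProvider", "v2", date(2025, 3, 1),
                "ticket text, per tenant", "platform-team", date(2025, 11, 15)),
    ModelRecord("search-embeddings", "ExampleProvider", "v1", date(2024, 9, 10),
                "knowledge-base articles", "search-team", date(2025, 2, 1)),
]

print(to_csv(inventory))
for record in overdue_for_review(inventory, today=date(2026, 1, 15)):
    print("needs review:", record.model_name)
```

Keeping the record in version control also gives you a change history for free, which helps with the "who approved each deployment" question.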

AI-specific risk assessments

Not a generic risk register with "AI" in the title. A structured assessment that asks: What decisions does your AI influence? What happens when it's wrong? What's the blast radius? Who is harmed? How would you detect the failure?

AI failures look different from application failures. A traditional application breaks and throws errors. An AI feature produces confidently wrong output that looks correct, and someone acts on it. An agent discloses information it should not, runs tool calls and exfiltrates data, or drifts outside its intended scope slowly enough that nobody notices until the damage is done. Your risk assessment needs to account for data exfiltration, hallucination, bias or ethical concerns, agentic scope creep, and wrong outputs that get acted on downstream.

NIST AI RMF's Map function gives you some structure. Enumerate use cases. Identify potential harms per use case. Assess likelihood and severity. Document treatments. If you don't have one, spend an afternoon thinking through it. If you have a generic one, make it specific to what your AI features actually do. LLMs are decent at helping you think through these risks and document them. Keep in mind that if you document something as a risk, you might get asked exactly how you've addressed it.
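The enumerate, identify, assess, document sequence fits in a small register. The sketch below is one possible shape, not a format prescribed by NIST AI RMF: the 1 to 5 scales, the likelihood times severity score, and the example entries are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    use_case: str
    harm: str
    likelihood: int   # 1 (rare) to 5 (frequent); the scale is an assumption
    severity: int     # 1 (minor) to 5 (severe); the scale is an assumption
    detection: str    # how the failure would be noticed
    treatment: str    # what is done about it; empty means undocumented

    @property
    def score(self) -> int:
        return self.likelihood * self.severity


def prioritized(risks):
    """Highest score first, so review time goes to the worst combinations."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


def untreated(risks):
    """Risks that were written down but have no documented treatment yet."""
    return [r for r in risks if not r.treatment.strip()]


# Hypothetical entries for illustration only.
register = [
    Risk("ticket summarization", "wrong summary acted on by a support agent",
         3, 3, "agent feedback flag, spot checks", "human review before customer-facing send"),
    Risk("agentic workflow", "tool call exfiltrates data outside tenant scope",
         2, 5, "authorization decision logs", "intent-based access control, deny by default"),
    Risk("lead scoring", "scores differ across protected classes",
         2, 4, "", ""),
]

for risk in prioritized(register):
    print(risk.score, risk.use_case, "-", risk.harm)
print("no treatment documented:", [r.use_case for r in untreated(register)])
```

The `untreated` check is the useful part before an audit: it lists the risks you have admitted to in writing but cannot yet show a response for.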

One important distinction: you're assessing the risk of your integration, not the risk of the model. You don't control the model's training data. You control what customer data you expose to it and what decisions you let it influence.

Bias and fairness testing

Even though you didn't train the model, you do inherit its biases. If your AI makes recommendations that affect customer outcomes, it's worth trying to test for disparate impact. Colorado AI Act requires it for high-risk decisions. EU AI Act requires it for high-risk systems.

For integrators, this doesn't require an ML fairness or ethics team necessarily, but it does mean documenting what recommendations your AI makes, testing representative scenarios across demographic categories, and recording results. If you use the model for classification or scoring, check whether outcomes differ meaningfully across protected classes.
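A first pass at "check whether outcomes differ meaningfully" can be a comparison of favorable-outcome rates per group. The sketch below uses the four-fifths ratio, a common heuristic borrowed from US employment-selection guidance. Treat the 0.8 threshold as an assumption: neither the Colorado AI Act nor the EU AI Act is claimed here to prescribe it, and the group labels and counts are synthetic.

```python
from collections import Counter


def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs -> {group: favorable-outcome rate}."""
    totals, favorable = Counter(), Counter()
    for group, selected in outcomes:
        totals[group] += 1
        if selected:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's rate divided by the highest group's rate (1.0 means parity with the top)."""
    top = max(rates.values())
    if top == 0:
        return {group: 1.0 for group in rates}  # nobody selected, so no disparity to measure
    return {group: rate / top for group, rate in rates.items()}


def flagged(ratios, threshold=0.8):
    """Groups whose ratio falls below the threshold (0.8 is the assumed heuristic)."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Synthetic test results: (group label, did the model recommend the favorable outcome?)
results = (
    [("group_a", True)] * 40 + [("group_a", False)] * 60
    + [("group_b", True)] * 28 + [("group_b", False)] * 72
)

rates = selection_rates(results)
ratios = impact_ratios(rates)
print(rates)
print(ratios)
print("below threshold:", flagged(ratios))
```

A flagged group is a prompt to investigate and document, not a verdict. Small sample sizes in particular can produce ratios that look alarming and mean little.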

The compliance bar is evidence of diligence, not perfection. No model is bias-free. Frameworks require that you tested, documented what you found, and have a plan for any identified problems.

AI-specific incident response

Most IR plans cover data breaches. Does yours cover prompt injection exploitation? Cross-tenant leakage through AI responses? Hallucination causing customer harm? Agent semantic drift?

These are different incident types. Prompt injection doesn't look like SQL injection on your SIEM or app logs. Cross-tenant leakage through AI leaves different forensic artifacts than a database breach. Changes in agent responses over time and across models are gradual, not a single event. Each needs different detection methods, different response procedures, different escalation paths.

Add AI scenarios to whatever IR plan is in place. Define what counts as an AI security incident versus a performance issue. Assign owners. Run a tabletop on at least one scenario. This is typically a few hours or a day of work, not weeks.

How to sequence this

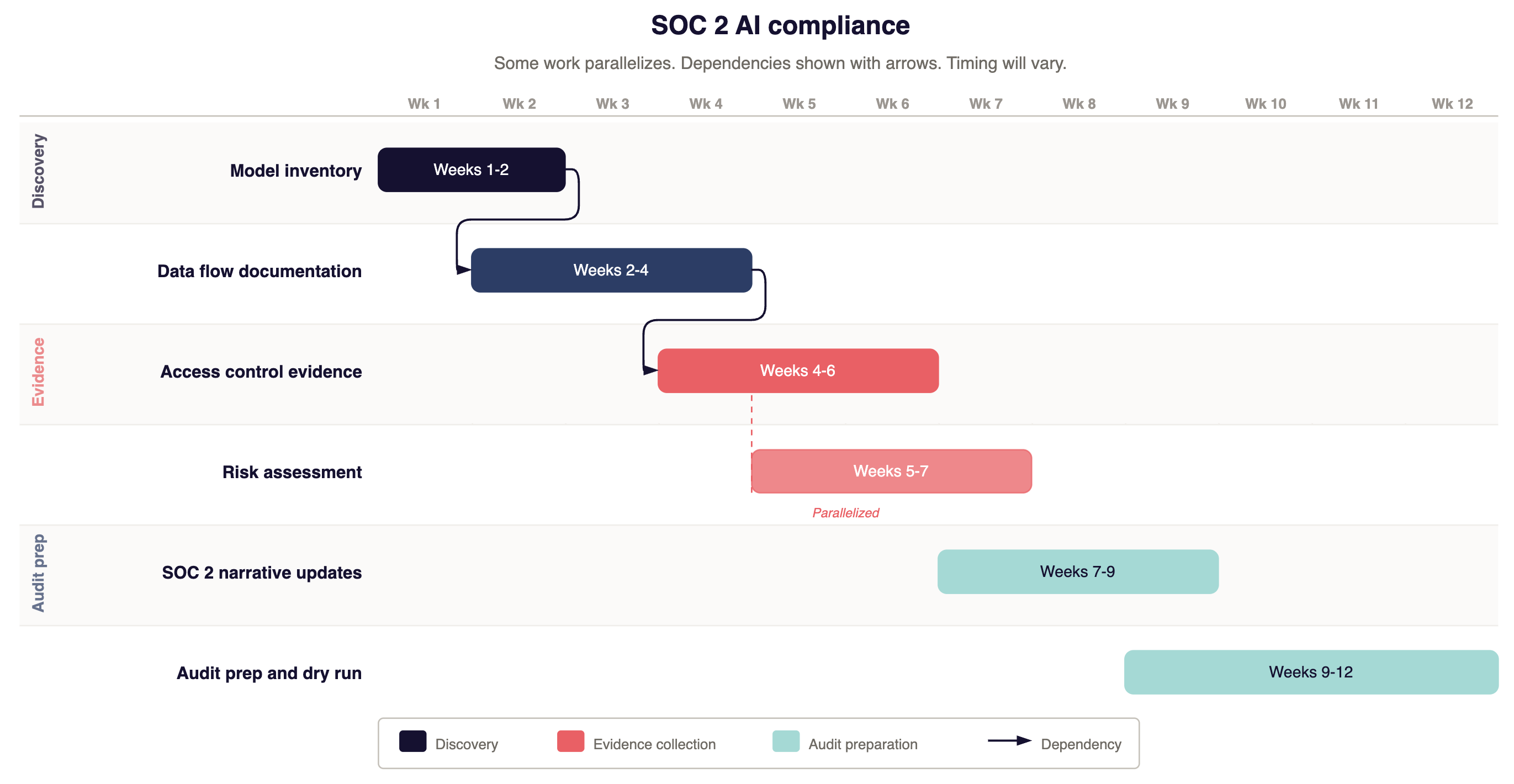

SOC 2 audit

Week 1: Build the model inventory. Every model in production, what data it accesses, who owns it. This is the auditor's first question and it feeds everything else.

Weeks 2-4: Document AI data flows. Diagram how customer data moves through the AI pipeline. Show where tenant boundaries exist, where data is encrypted, where data exits your environment to model providers. Include the failure modes you've designed for, not just the happy path.

Weeks 3-6: Compile access control evidence. Export IBAC policies, document the permission model, show how AI feature access is restricted and for whom.

Weeks 4-9: Update your SOC 2 system description to include AI. Add AI language to the logical access (CC6), monitoring (CC7), change management (CC8.1), and vendor management (CC9.2) narratives — and to Confidentiality (C1.1, C1.2) if the report carries it. Prepare the narrative for how existing controls extend to AI operations.

Weeks 8-12: Have someone who didn't build it review the evidence. Prepare for common auditor questions. Dry-run with your auditor if they'll do it.

This should cover the 80/20 that keeps AI from stalling your audits and questions.

Starting from zero

Use NIST AI RMF as the organizing framework. It's free, it maps to everything else, and the four-function taxonomy is the cleanest structure available.

Start with the model inventory (since you can't govern what you can't list), then data flow documentation, then access control evidence, then risk assessment, then incident response procedures.

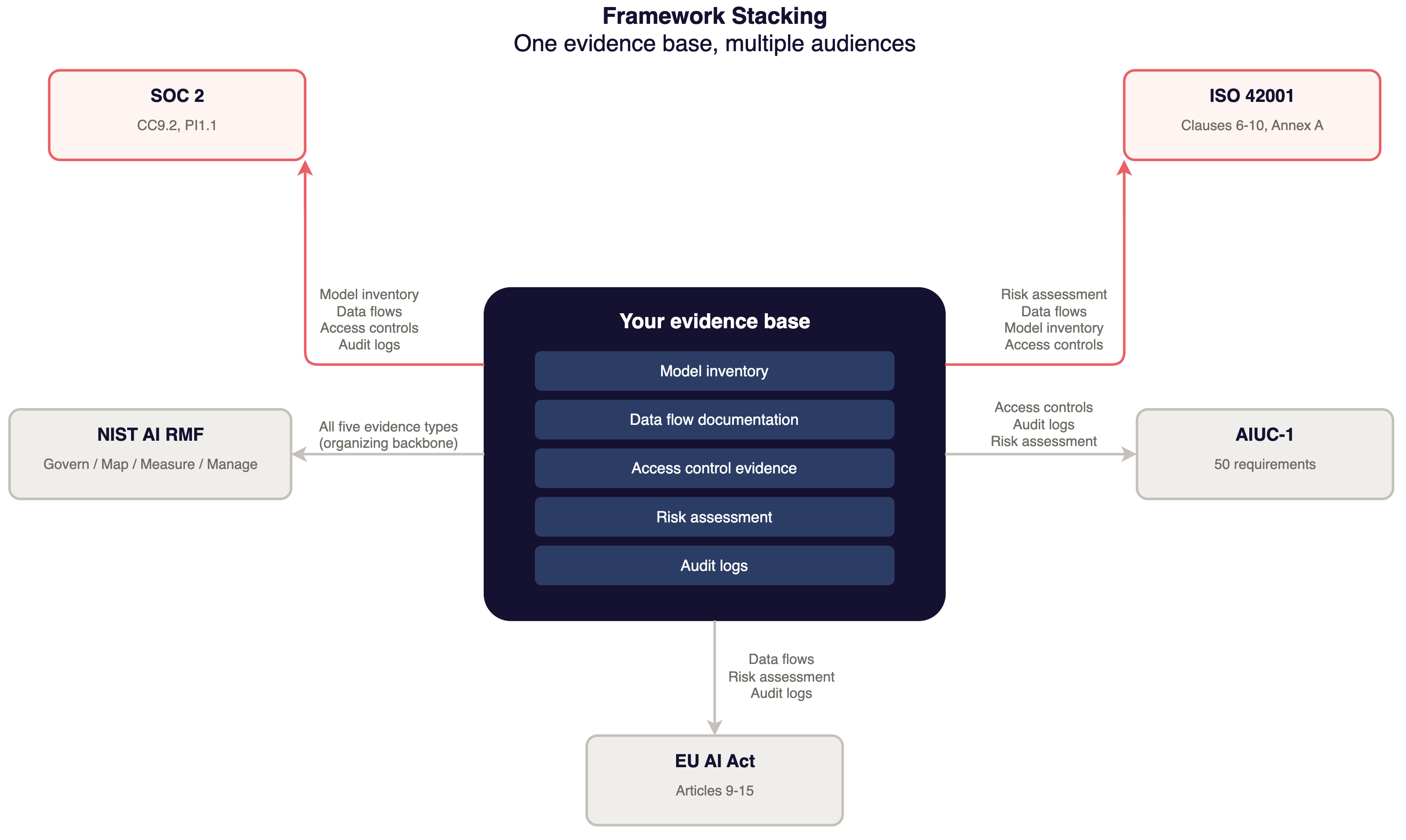

Framework stacking: stop describing the same controls three times

If you treat SOC 2, ISO 42001, and NIST AI RMF as three independent projects, you create three model inventories, three risk assessments, and three evidence repositories. Same controls, different formatting, triple the maintenance.

How the frameworks feed each other

ISO 42001 generates SOC 2 evidence. Your AI risk assessment from ISO 42001 clause 6.1 is the controls narrative your SOC 2 auditor needs. Your lifecycle documentation from Annex A demonstrates access control, change management, and — if the report carries Confidentiality — confidential data handling. Write it for ISO 42001, extract a subset for SOC 2.

NIST AI RMF organizes everything. Govern maps to ISO 42001 governance (clause 5) and SOC 2 control environment (CC1). Map feeds risk assessments for both. Measure aligns with the SOC 2 monitoring criteria (CC7.2) and the equivalents in ISO 42001 and EU AI Act. Manage maps to risk treatment and incident response (CC7.3, CC7.4). This is a useful backbone for a unified evidence base.

AIUC-1 evidence supports ISO 42001 agent controls. If you document agent permissions, testing cadence, and safety controls for AIUC-1, the same documentation supports ISO 42001 lifecycle management and SOC 2 logical access and monitoring criteria.

What this looks like in practice

One repository or folder with your model inventory, data flow diagrams, access control matrices, risk assessments, bias testing results, incident response procedures. When your auditor asks, you can pull from this repository and format for their requirements. When an enterprise buyer sends an ISO 42001 questionnaire, you map from the same repository to ISO clauses. When you need NIST AI RMF alignment documentation, same evidence, different taxonomy.
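A shared repository pays off most when each artifact is tagged once with every framework reference it supports. The sketch below shows one hypothetical index format and a gap check against a request list. The file paths, the tags, and the `REQUESTED` lists are illustrative assumptions, not a complete reading of any framework.

```python
# Hypothetical evidence index: one entry per artifact, tagged with the
# framework references it supports. Paths and tags are illustrative.
EVIDENCE = [
    {"artifact": "risk/ai-risk-assessment.md",
     "supports": {"ISO 42001": ["clause 6.1"], "NIST AI RMF": ["Map"]}},
    {"artifact": "access/ibac-policies.yaml",
     "supports": {"SOC 2": ["CC6.1", "CC6.3"], "AIUC-1": ["permission requirements"]}},
    {"artifact": "logging/audit-trail-retention.md",
     "supports": {"SOC 2": ["CC7.2"], "NIST AI RMF": ["Measure"]}},
    {"artifact": "ir/ai-incident-runbook.md",
     "supports": {"SOC 2": ["CC7.3", "CC7.4"], "NIST AI RMF": ["Manage"]}},
]

# What a given requester is asking about (assumed, not exhaustive).
REQUESTED = {
    "SOC 2": ["CC6.1", "CC6.3", "CC7.2", "CC7.3", "CC7.4", "CC8.1", "CC9.2"],
    "NIST AI RMF": ["Govern", "Map", "Measure", "Manage"],
}


def evidence_for(framework, reference, evidence=EVIDENCE):
    """Artifacts tagged as supporting one framework reference."""
    return [e["artifact"] for e in evidence if reference in e["supports"].get(framework, [])]


def render(framework, requested=REQUESTED, evidence=EVIDENCE):
    """One row per requested reference: the artifacts behind it, or an empty list."""
    return {ref: evidence_for(framework, ref, evidence) for ref in requested[framework]}


def gaps(framework, requested=REQUESTED, evidence=EVIDENCE):
    """Requested references that no artifact in the repository supports yet."""
    return [ref for ref, artifacts in render(framework, requested, evidence).items() if not artifacts]


for reference, artifacts in render("SOC 2").items():
    print(reference, "->", artifacts or "GAP")
print("NIST AI RMF gaps:", gaps("NIST AI RMF"))
```

Adding a new framework then means adding tags and a request list. The artifacts themselves stay where they are.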

If you're describing the same controls in three formats for three frameworks with no shared source, fix that before doing anything else.

Our AI Questionnaire Answer Bank has pre-written answers for AI compliance questions at two maturity levels. It's a useful starting point for building an evidence library that maps to multiple frameworks.

Where you stand on TRACTION

A quick check against the TRACTION framework:

L1: No AI compliance posture. SOC 2 exists but nothing covers AI. Auditor hasn't asked yet. Buyers are asking and you're improvising.

L2: Model inventory exists. Basic documentation. You can list your models, describe data flows, point to access controls. You handle questionnaire basics but don't have structured risk assessments.

L3: Structured risk assessment, evidence mapped to frameworks, audit-ready. Your evidence base maps to SOC 2, ISO 42001, and NIST AI RMF. AI-specific IR procedures exist. You can answer detailed questions with documentation behind them. This is the target for most integrators.

L4: Continuous compliance monitoring. Evidence is a byproduct of operations. Model inventory updates trigger from deployments. Risk assessments follow a defined cadence. New frameworks are formatting exercises, not new projects.

This week

Build your model inventory and data inventory. Every model in production, what it accesses, who owns it, what version, what it's processing. This single artifact will get you started on unblocking your compliance conversations.

Map backward from your architecture. If you built tenant isolation, access control, and audit logging from this series, map those to ISO 42001, SOC 2, and NIST AI RMF. Many teams will find they are further along than they thought.

Pick one framework entry point. SOC 2 audit coming? Start there. Need an organizing structure? NIST AI RMF. Want an enterprise differentiator? ISO 42001.

Stop duplicating. If you have the same control documented separately for multiple frameworks with no shared source, consolidate those.

The compliance landscape for AI will keep shifting. New regulations will appear. Existing standards will mature. Auditor expectations will evolve. But the approach stays the same:

- know what you're running

- document how it works

- prove it does what you say.

If you build the evidence base right, every new framework is a straightforward translation exercise.

Next in this series: Part 5 covers what happens when enterprise buyers ask questions that go beyond any framework, and how to build the security narrative that survives scrutiny.