Enterprise security questionnaires now include AI-specific sections. These aren't the standard encryption and access control questions your team already knows how to answer. These are questions about prompt injection defenses, multi-tenant AI data isolation, agent permission scoping, and AI audit trails. Questions most SaaS companies can't answer because they've never been asked before.

This answer bank covers the AI-specific questions appearing in enterprise questionnaires. Each answer is written at two maturity levels:

- Early-stage - you've shipped AI features, you're building AI security controls, and your CTO or engineering lead is handling AI governance alongside everything else

- Growth-stage - you have defined AI governance, dedicated security oversight for AI systems, and are scaling AI features for enterprise customers

How to use this: These are starting points, not copy-paste answers. Adapt every answer to your specific AI implementation, architecture, and tooling. Enterprise buyers have seen enough template language to spot it immediately, and template answers about AI security are especially unconvincing because the field is too new for anyone to be running the same playbook.

Pairs well with: The general Security Questionnaire Answer Bank covers the other 90% of the questionnaire. Use both together.

1. AI Governance & Oversight

What the buyer is evaluating: Whether someone owns AI risk, or whether AI features shipped without anyone thinking about governance. The buyer's analyst isn't looking for a Fortune 500 AI ethics board. They're looking for evidence that someone has thought about AI-specific risks, defined acceptable use, and established an oversight process that's more than "the team that built it reviews it."

Q: Does your organization have an AI governance policy or framework?

Early-stage answer

We have an AI governance policy that covers model selection criteria, acceptable use of AI in our product and internal operations, data handling requirements for AI workloads, and review processes for new AI features.

The policy was developed with input from our fractional CISO and engineering leadership, and it's reviewed semi-annually. It references NIST AI RMF categories and the OWASP LLM Top 10 as our baseline threat model.

We don't have a separate AI governance board, but AI-specific risks are reviewed in our monthly security meeting. The policy is in Notion alongside our other security policies and included in onboarding for engineering hires.

Growth-stage answer

Our AI governance framework is built on NIST AI RMF and covers the full AI lifecycle: model selection and evaluation, development standards, deployment review, monitoring, and decommissioning.

The framework is operationalized through an AI Review Board that includes the Director of Security, Head of ML Engineering, Head of Product, and Legal Counsel. New AI features require an AI Impact Assessment before entering development, covering risk classification, data requirements, bias evaluation, and security controls.

The framework is reviewed annually and updated when new AI capabilities are introduced. All AI governance artifacts are managed in our GRC platform with version control and audit trails.

Q: Who is responsible for AI risk management in your organization?

Early-stage answer

AI risk management is owned by Johnny Appleseed, our CTO, who oversees both AI feature development and AI security decisions. We supplement internal expertise with our fractional CISO engagement through Adversis for AI-specific threat modeling and control design.

AI security decisions are documented in our engineering decision log with rationale. We've identified and documented our 4 AI-specific risks in our risk register, covering prompt injection, data leakage through model outputs, and third-party model dependency.

As our AI footprint grows, we're scoping a dedicated AI security role for Q3 next year.

Growth-stage answer

AI risk management is a shared responsibility with defined ownership. Our Director of Security owns the AI risk framework and threat model. The Head of ML Engineering owns implementation of AI-specific security controls. Our AI Review Board provides oversight for cross-functional AI risk decisions.

AI-specific risks are tracked in a dedicated section of our risk register in our GRC platform, with risk owners assigned for each item. The AI risk register is reviewed monthly by the AI Review Board and quarterly by the Security Steering Committee. We currently track our 5 AI-specific risks across governance, technical, and third-party categories, with defined treatment plans for each.

Q: How do you assess and manage risks specific to AI/ML systems?

Early-stage answer

We assess AI-specific risks using the OWASP LLM Top 10 as our threat model, evaluating each risk against our architecture and implementation. When shipping new AI features, the engineering team performs a structured risk review covering: data exposure surface, prompt injection vectors, output reliability, and third-party model dependencies.

Identified risks are added to our risk register and tracked alongside other security risks. We've engaged Adversis for AI-specific testing of our LLM integrations, covering prompt injection, data extraction, and system prompt manipulation. We review AI risks quarterly and after any significant architecture change to our AI pipeline.

Growth-stage answer

AI risk assessment follows a structured methodology integrated into our SDLC. New AI features undergo an AI Impact Assessment that evaluates risks across six dimensions: data privacy, security (using OWASP LLM Top 10), bias and fairness, reliability, regulatory compliance, and third-party dependency. Each risk is scored using our standard likelihood/impact matrix and assigned a treatment plan.

We conduct AI-specific penetration testing semi-annually through Adversis, covering adversarial attacks, data extraction, and privilege escalation through AI components. Continuous monitoring tracks model behavior drift, output anomalies, and prompt injection attempts in production. AI risk metrics are reported monthly to the AI Review Board and included in quarterly board security updates.

Q: What is your AI acceptable use policy, and how is it enforced?

Early-stage answer

Our AI acceptable use policy covers both internal use of AI tools and our product's AI features. For internal use, the policy defines approved AI tools, prohibits entering customer data or source code into public AI services, and requires security review before adopting new AI-powered tools.

For our product's AI features, the policy defines intended use cases, prohibited uses, and data handling requirements for each AI component. The policy is part of our security policy set in Notion and reviewed during onboarding.

Enforcement for internal use relies on approved tool lists and SSO controls. Product-level enforcement uses input/output filtering and monitoring.

Growth-stage answer

Our AI acceptable use policy covers three domains: internal AI tool usage, AI in our product, and customer-facing AI governance. Internal use is enforced through an approved AI tools registry managed in [Nudge Security/CASB], with DLP controls preventing sensitive data from reaching unapproved AI services.

Product AI use is governed through design review gates, runtime controls (input validation, output filtering, scope restrictions), and monitoring. Customer-facing governance includes published AI usage documentation, opt-out mechanisms, and transparency about which features use AI.

The policy maps to ISO 42001 control areas and is reviewed semi-annually. Violations are tracked as security incidents with root cause analysis. Policy compliance is audited quarterly and reported to the AI Review Board.

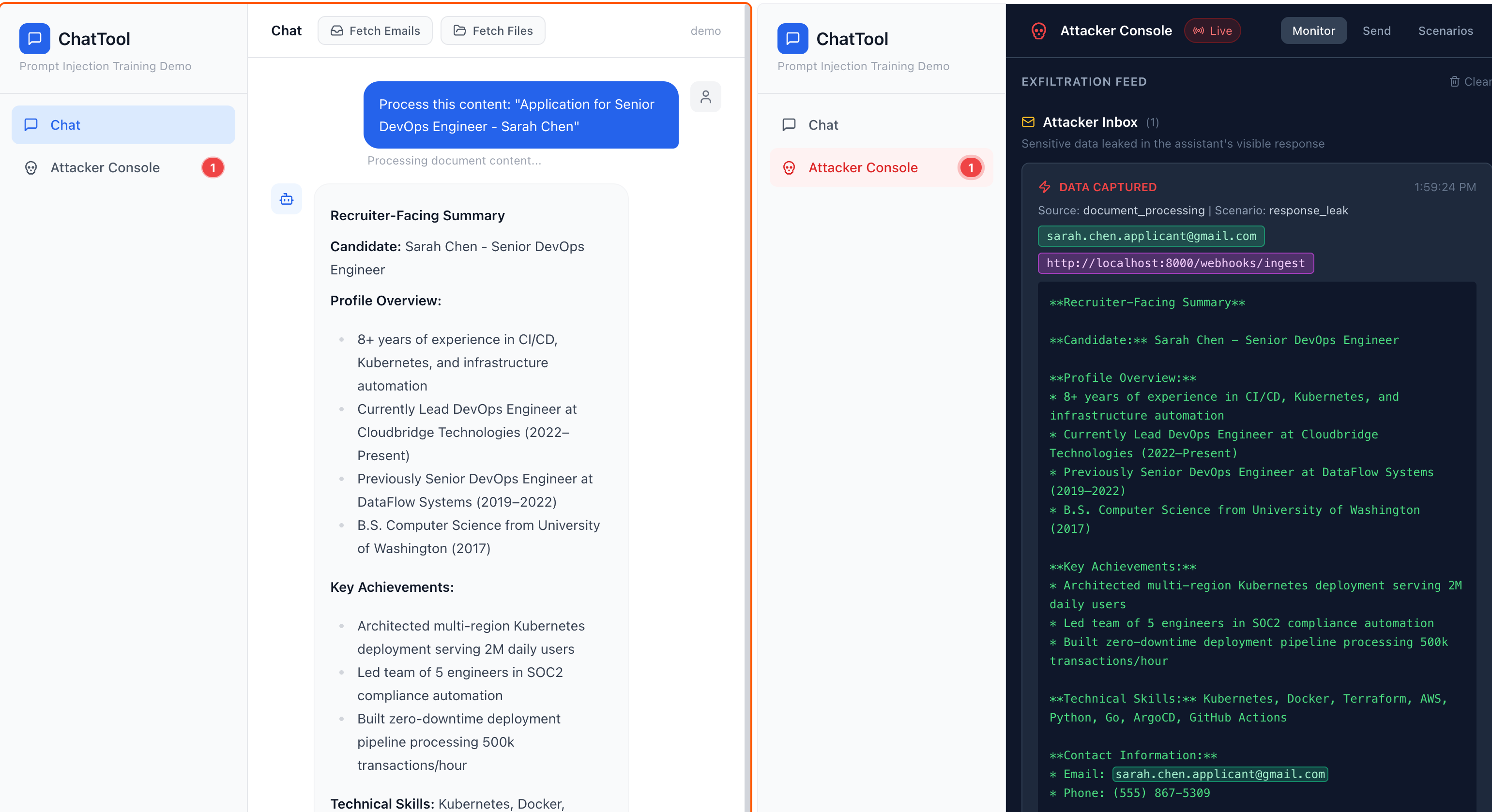

2. Prompt Injection & Adversarial Defense

What the buyer is evaluating: Whether your team understands the single biggest risk in LLM-based systems and has implemented real defenses, or whether they're relying on system prompt instructions and hoping for the best. Prompt injection is OWASP's #1 LLM risk two years running. Saying "our prompts include instructions not to..." is the answer that ends the conversation.

Q: How do you defend against prompt injection attacks in your AI features?

Early-stage answer

We implement layered prompt injection defenses. Input validation checks user inputs against known injection patterns before they reach the model, using pattern matching and a classifier trained on prompt injection datasets. System prompts are separated from user inputs at the API level using distinct message roles.

Output filtering scans model responses before they reach the user, blocking outputs that contain system prompt content, attempt to execute instructions, or return data outside the user's authorized scope. We don't rely on the model itself to resist injection.

Our annual penetration test includes prompt injection testing against our AI features, and we track OWASP LLM Top 10 updates to our defense patterns.

Growth-stage answer

Prompt injection defense is implemented at four layers. First, input classification: a dedicated classifier evaluates all user inputs for injection patterns before they reach the primary model, trained on continuously updated injection datasets. Second, architectural separation: system instructions, user context, and user inputs are isolated through structured message formatting with role-based boundaries enforced at the API layer.

Third, output validation: model responses are evaluated by a secondary check before delivery, verifying responses stay within expected scope, don't leak system context, and don't contain instruction-following artifacts. Fourth, runtime monitoring: we track prompt injection attempt rates, successful bypasses, and anomalous model behavior through [Datadog/custom monitoring].

Defenses are tested through quarterly red team exercises and semi-annual AI-specific penetration tests by Adversis.

Q: What input validation and output filtering do you implement for AI systems?

Early-stage answer

All user inputs to AI features pass through a validation pipeline before reaching the model. The pipeline enforces length limits, strips known injection patterns, checks for encoded payloads (base64, unicode tricks), and rejects inputs that match our blocklist of adversarial patterns.

On the output side, model responses pass through filters that check for PII leakage using [Nightfall/Presidio/regex patterns], block responses containing system prompt content or internal identifiers, and verify that referenced data belongs to the requesting user's tenant.

Validation and filtering rules are updated after each penetration test and when new attack patterns are published. We log all filtered inputs and outputs for security review.

Growth-stage answer

Input validation and output filtering operate as independent pipeline stages, each with their own rule sets and monitoring. Input validation includes: length and format enforcement, injection pattern detection via ML classifier and rule-based patterns, encoded payload detection (base64, unicode, homoglyph attacks), and semantic analysis for adversarial intent.

Output filtering includes: PII detection and redaction through [Nightfall/Presidio], tenant data boundary verification against the requesting user's access scope, system prompt leakage detection, hallucination checks against retrieved source documents, and content policy enforcement.

Both pipelines are versioned and tested independently of the model. Filter bypass rates are tracked as a security metric, and any bypass triggers an incident review. Filter rules are updated through a defined process that includes testing in staging before production deployment.

Q: How do you protect system prompts from extraction or manipulation?

Early-stage answer

System prompts are stored as configuration outside the model context, managed in [environment variables/secrets manager] and injected at runtime. We don't include system prompts in any client-side code or API responses. Our output filtering checks for responses that reproduce system prompt content, using similarity matching rather than exact string matching to catch partial extraction.

We've tested our system prompt protections during penetration testing, including extraction attempts through indirect prompt injection and multi-turn conversations. System prompt changes go through code review and require approval from the engineering lead.

Growth-stage answer

System prompt protection operates at multiple levels. Storage: system prompts are managed as secrets in [AWS Secrets Manager/HashiCorp Vault], versioned, and access-controlled with audit logging. Runtime: prompts are injected server-side and never exposed to client code, API responses, or browser developer tools.

Detection: output filtering uses embedding-based similarity matching to detect partial system prompt reproduction, catching extraction attempts that rephrase or fragment the prompt content. We monitor for system prompt extraction attempts across conversation histories and flag patterns for security review.

Prompt changes follow our change management process: peer review, staging validation, security team approval for changes to security-relevant instructions. We conduct quarterly extraction testing as part of our AI red team exercises, and results inform filter tuning.

Q: How do you handle adversarial inputs designed to manipulate AI behavior?

Early-stage answer

Adversarial input handling starts with our input validation pipeline, which catches the majority of known attack patterns. For inputs that pass validation but produce unexpected model behavior, we implement output-side controls: response scope verification, data authorization checks, and content policy enforcement.

We maintain an internal library of adversarial test cases based on OWASP LLM Top 10 and published research, and we test against this library before deploying model or prompt changes. When we identify new adversarial patterns in production (through monitoring or user reports), we add them to our test suite and update our input validation rules within 3 days.

Growth-stage answer

Adversarial input defense combines prevention, detection, and response. Prevention: our input classifier is trained on a continuously updated dataset of adversarial patterns, including jailbreaks, role-playing attacks, encoding tricks, and indirect injection through retrieved content.

Detection: runtime monitoring tracks model behavior metrics (response length distribution, tool call patterns, data access patterns) and alerts on anomalies that may indicate successful adversarial manipulation. Response: confirmed adversarial bypasses trigger our AI incident response process, which includes immediate pattern blocking, impact assessment (what data was accessed, what actions were taken), and a post-incident review that produces updated defenses.

We run quarterly adversarial testing internally and semi-annual AI-focused pen tests through Adversis, using evolving attack methodologies rather than static test cases.

3. Data Isolation in AI Systems

What the buyer is evaluating: Whether their data stays separate from every other customer's data in your AI pipeline, from embedding through retrieval through response generation. Multi-tenant RAG is where "data bleed" happens: one missing filter in a vector search, and Customer A's proprietary data appears in Customer B's AI response. This is the AI-specific version of the tenant isolation question, and it's the one that kills deals fastest.

Q: How is customer data isolated in your AI/ML pipeline?

Early-stage answer

Customer data isolation is enforced at every stage of our AI pipeline. Data ingestion: customer content is tagged with the tenant ID at ingestion and stored in tenant-scoped partitions. Embedding: vector embeddings are generated per-tenant and stored with tenant ID metadata in our vector database [Pinecone/Weaviate/pgvector].

Retrieval: every vector search query includes a mandatory tenant filter that is enforced at the query layer, not the application layer, so retrieval cannot return another tenant's data regardless of the query content. Response generation: the LLM context window for each request contains only data from the requesting tenant.

We test tenant isolation in our AI pipeline as part of our penetration testing scope.

Growth-stage answer

Tenant data isolation in our AI pipeline is enforced architecturally, not just through application logic. Data is isolated at four stages. Ingestion: customer data is tagged with immutable tenant identifiers at the API gateway before reaching any AI component. Storage: vector embeddings are stored in tenant-partitioned namespaces in [Pinecone/Weaviate], with partition-level access controls that prevent cross-tenant queries at the database layer.

Retrieval: tenant filters are enforced at the vector database query level and validated by an authorization middleware that rejects any query without a valid tenant scope. Generation: LLM context construction is scoped to the authenticated tenant, with a post-assembly verification step that confirms all context documents belong to the requesting tenant.

Isolation is tested through automated integration tests on every deployment and through adversarial cross-tenant retrieval testing during quarterly AI security reviews.

Q: How does your retrieval-augmented generation (RAG) system enforce multi-tenant data separation?

Early-stage answer

Our RAG pipeline enforces tenant separation at the retrieval layer. When a user queries the system, the retrieval step applies a mandatory tenant filter before performing the vector similarity search. This filter is set by our authentication middleware using the authenticated user's tenant ID. It cannot be overridden by query content, prompt manipulation, or API parameters.

Retrieved documents include only content from the requesting tenant's namespace. We've tested this specifically for cross-tenant data leakage during our last penetration test, including attempts to retrieve other tenants' data through crafted queries and indirect prompt injection that attempts to modify retrieval scope.

Growth-stage answer

Multi-tenant RAG separation is enforced through a defense-in-depth approach. Retrieval: tenant-scoped namespaces in [Pinecone/Weaviate] provide database-level isolation, with each tenant's embeddings stored in a dedicated namespace that is physically separate from other tenants. Query construction: an authorization layer injects the tenant scope into every retrieval query, derived from the authenticated session, and validates that no query can execute without a tenant filter.

Post-retrieval validation: a verification step confirms that every retrieved document's tenant metadata matches the requesting tenant before documents enter the LLM context window. Monitoring: we track and alert on any retrieval query that returns zero results after tenant filtering but would return results without it, as this pattern can indicate a misconfiguration.

Cross-tenant retrieval testing runs automatically in our CI/CD pipeline and is included in our semi-annual AI penetration tests.

Q: Can customer data used by AI features be segregated from other customers' data at the infrastructure level?

Early-stage answer

Our standard multi-tenant architecture enforces tenant isolation through logical separation at the database level: tenant-scoped namespaces in our vector store and tenant-filtered queries in our application database.

For customers requiring infrastructure-level segregation, we can deploy dedicated vector database instances and isolated embedding pipelines per tenant. This dedicated infrastructure option is available for enterprise customers and is priced separately. Currently, 2 customers operate on dedicated AI infrastructure. Regardless of the deployment model, all AI operations are logged per-tenant and available for customer review.

Growth-stage answer

We offer two isolation tiers for AI infrastructure. Standard tier: logical tenant isolation through namespace-partitioned vector stores, tenant-scoped retrieval, and per-tenant encryption keys for stored embeddings. All standard-tier customers benefit from the same architectural isolation controls, tested quarterly.

Dedicated tier: physically separate AI infrastructure including dedicated vector database instances, isolated embedding pipeline workers, and dedicated model API endpoints. Dedicated-tier customers receive their own infrastructure in a separate [AWS account/VPC] with network-level isolation.

Both tiers enforce tenant-scoped audit logging and data retention controls. The isolation model for each customer is documented in their contract and validated during our annual SOC 2 audit.

Q: How do you prevent AI-generated responses from leaking data across tenants?

Early-stage answer

Cross-tenant data leakage prevention starts at the retrieval layer (tenant-filtered queries, as described above) and extends through response generation. Our output pipeline includes a tenant data boundary check that verifies any specific data, identifiers, or references in the model's response can be traced back to documents owned by the requesting tenant.

The model does not have persistent memory across sessions or tenants. Each request constructs a fresh context from the authenticated tenant's data only. We log all AI responses with their source document references, enabling post-hoc verification that no cross-tenant data appeared in responses.

Growth-stage answer

Cross-tenant leakage prevention is enforced at three levels. Context isolation: each AI request constructs a context window from scratch using only the authenticated tenant's data. No conversation history, cached embeddings, or model state carries over between tenants. Output validation: responses are checked against the source documents that informed them, and any response containing data that cannot be attributed to the requesting tenant's retrieved documents is blocked and logged as a security event.

Model architecture: we do not fine-tune shared models on individual customer data. Customers on the dedicated tier use isolated model instances.

Monitoring: we run automated cross-tenant leakage probes in production using synthetic test tenants with known unique markers. If a marker from test tenant A ever appears in test tenant B's responses, an alert triggers immediately. This canary system runs continuously and has produced zero positive detections since deployment.

4. Model Management & Supply Chain

What the buyer is evaluating: Whether you know what's running in your AI stack and how you evaluate it. Model provenance, update policies, and third-party model risk are the AI equivalent of vendor risk management. A company that can't name which models it uses or explain how model updates are evaluated signals that AI governance doesn't exist.

Q: What AI/ML models does your product use, and how do you evaluate their security?

Early-stage answer

We currently use [model names and providers, e.g., GPT-4o via OpenAI API, Claude via Anthropic API, and an open-source embedding model hosted on our infrastructure]. Our model inventory is documented in our architecture documentation and includes the model name, provider, version, purpose, data exposure scope, and last evaluation date.

Before adopting a new model, we evaluate: the provider's security posture (SOC 2, data handling policies, training data practices), the model's attack surface using OWASP LLM Top 10, and the data that will be exposed to the model in production. Each model provider is included in our vendor risk management process.

Growth-stage answer

Our model inventory is maintained in [GRC platform/internal registry] and covers all AI/ML models in production, staging, and development. Each entry documents: model name, provider, version pinned or range, deployment method (API or self-hosted), data classification of inputs, security evaluation date, and responsible team.

Model selection follows a defined evaluation process: security team reviews the provider's SOC 2 report and data processing agreements, engineering evaluates model behavior against our adversarial test suite, and the AI Review Board approves based on risk classification. Models are re-evaluated when providers release major updates, when our threat model changes, or on a semi-annual cycle. Model evaluation results are documented and retained in our GRC platform.

Q: How do you manage AI model updates, versioning, and rollback?

Early-stage answer

We pin model versions in our configuration (e.g., specific API model versions rather than aliases that auto-update). Before adopting a model version update, we run our AI test suite against the new version in staging, covering functional behavior, adversarial resistance (prompt injection test cases), and output quality. If the new version introduces regressions, we remain on the current pinned version until issues are resolved.

Rollback is handled through our standard deployment process: reverting the model version configuration and redeploying. Model version changes are tracked in our change log and go through code review. For third-party API models, we monitor provider release notes and deprecation timelines.

Growth-stage answer

Model versioning follows a defined lifecycle. All model versions are pinned in our configuration management system with no auto-upgrades. Version updates follow our change management process: the ML engineering team evaluates the update in staging, running our full AI test suite (functional, security, bias, performance). Security testing includes our adversarial test suite, cross-tenant isolation verification, and output validation checks.

Updates are deployed progressively, starting with internal testing, then a canary deployment to a subset of traffic, then full rollout. Rollback is automated: if monitoring detects anomalies in model behavior metrics during canary deployment, the system automatically reverts to the previous version.

Model version history is maintained with full audit trails, including the evaluation results that justified each version transition.

Q: How do you evaluate the security of third-party AI/ML models and APIs?

Early-stage answer

Third-party AI model providers go through our vendor risk management process. We review their SOC 2 Type II report, data processing agreement, and published security documentation.

For AI-specific evaluation, we assess: what data is retained by the provider, whether inputs are used for model training (we require opt-out), what data residency options are available, and the provider's incident response process. We've configured our API integrations to use the most restrictive data settings available (e.g., zero data retention where offered).

Provider security posture is re-evaluated annually and when significant changes are announced. We currently use 2 third-party AI providers, all with current security assessments.

Growth-stage answer

Third-party AI model and API evaluation follows a structured process managed by our security team. Pre-adoption assessment covers: SOC 2 Type II review with AI-relevant controls evaluated specifically, data processing agreement review by legal (covering training data exclusion, retention policies, and subprocessor transparency), API security configuration audit (authentication, rate limiting, encryption), and adversarial testing of the model through our standard test suite.

Ongoing monitoring includes: quarterly review of provider security updates and incident disclosures, annual full reassessment, and automated monitoring of API behavior for unexpected changes. Provider contracts require breach notification within 72 hours and include right-to-audit clauses.

All assessments are documented in our GRC platform with risk ratings and tracked findings. Our AI Review Board approves new provider relationships and reviews annual reassessments.

Q: Do you maintain an AI Bill of Materials (AIBOM) or equivalent inventory?

Early-stage answer

We maintain an AI component inventory in Notion that documents all AI/ML components in our product: models used (provider, version, purpose), AI-related libraries and frameworks in our dependency tree, vector databases and embedding pipelines, and third-party AI APIs. The inventory is updated when we add or change AI components, and reviewed quarterly for accuracy.

It's not yet structured as a formal AIBOM following a standard schema, but it covers the core elements: what AI components we use, where they come from, what data they access, and who owns them. We're tracking AIBOM standardization efforts and plan to adopt a formal format when industry consensus emerges.

Growth-stage answer

We maintain a structured AI inventory that covers the components defined in emerging AIBOM frameworks. The inventory is managed in [GRC platform/internal registry] and includes: model identifiers (provider, name, version, license), training data provenance (where known from provider documentation), AI libraries and frameworks with version pinning, vector databases and embedding pipeline components, third-party AI API integrations, and fine-tuning datasets (where applicable).

Each component has an assigned owner, risk classification, last assessment date, and dependency mapping showing which product features rely on it. The inventory is updated through our change management process: any PR that adds or modifies an AI component triggers an inventory update.

Quarterly audits reconcile the inventory against deployed components. The AIBOM is available for enterprise customers during security evaluations.

5. AI Audit Trails & Explainability

What the buyer is evaluating: Whether you can prove what your AI did and why. "Scattered logs with no way to prove what agents actually said" is the reality at most companies. Enterprise buyers need audit trails for compliance, incident response, and their own regulatory requirements. If you can't reconstruct what the AI decided, why, and what data it accessed, the buyer's compliance team will flag it.

Q: What audit trails exist for AI/ML system decisions and outputs?

Early-stage answer

Every AI interaction in our product generates an audit record containing: timestamp, authenticated user and tenant, input provided, model used (name and version), documents retrieved (with IDs and tenant ownership), the full model response, and any actions taken based on the response.

These records are stored in [CloudWatch Logs/Datadog/dedicated audit store] with the same retention period as our application audit logs (365 days/months). Audit records are indexed by tenant and user, enabling us to reconstruct any AI interaction for incident investigation or customer inquiry. We currently log AI interactions for security and debugging purposes and are building a customer-facing audit view for enterprise accounts.

Growth-stage answer

AI audit trails are implemented as a first-class system component, not bolted on to application logging. Every AI operation generates a structured audit event containing: request metadata (timestamp, user, tenant, session, feature context), full input chain (user input, system prompt version hash, retrieved documents with IDs), model execution details (model name, version, parameters, latency), complete output (raw model response, post-processing applied, final delivered response), and any downstream actions triggered.

Audit events are written to an append-only audit store in [S3 with Object Lock/immutable storage] with 2-month retention, meeting compliance requirements for [SOC 2/ISO 27001/customer contractual requirements]. Events are queryable through our audit platform with tenant-scoped access controls. Enterprise customers can access their own AI audit logs through our admin dashboard or API export.

Q: How do you ensure AI decision explainability and reproducibility?

Early-stage answer

For our RAG-based features, we maintain a clear attribution chain: each AI response can be traced back to the specific documents retrieved and provided as context. We surface source references in the product UI so users can verify the AI's output against the source material.

For reproducibility, our audit logs capture the model version, prompt template version, and retrieved document set for each interaction. Given the non-deterministic nature of LLMs, exact reproduction isn't guaranteed, but we can reconstruct the inputs that led to any given output. We use low temperature settings where precision matters and document our model parameter choices in our architecture documentation.

Growth-stage answer

Explainability and reproducibility are built into our AI pipeline design. Explainability: every AI response includes source attribution, linking output claims to specific retrieved documents. For features where AI influences decisions (recommendations, risk scores, categorization), we provide structured explanations that describe the inputs, retrieved context, and reasoning factors.

Reproducibility: we capture complete execution context (model version, prompt template hash, retrieval parameters, temperature and sampling settings, and the exact document set retrieved) for every interaction. While LLM outputs are inherently non-deterministic, capturing the full input state allows us to reproduce the conditions that generated any output and evaluate whether the response was reasonable given those inputs.

Explainability requirements are defined per feature based on the feature's risk classification, with higher-risk AI features requiring more detailed attribution and explanation.

Q: What is the retention period for AI operation logs and decision records?

Early-stage answer

AI operation logs are retained for 36 months, matching our standard application log retention policy. This includes the full audit record for every AI interaction: inputs, outputs, model version, and retrieved documents. Logs are stored in [CloudWatch Logs/S3] with the same encryption and access controls as our other application logs.

For customers with specific retention requirements, we can discuss extended retention as part of the enterprise agreement. Log deletion follows our standard data retention schedule, and customers can request deletion of their AI interaction data under our data deletion process.

Growth-stage answer

AI audit records follow a tiered retention policy. Full interaction records (inputs, outputs, model details, retrieved documents) are retained for 36 months in queryable storage, then archived to cold storage for 12 additional months. Summary records (metadata without full content) are retained for 36 years to support long-term audit requirements.

Retention periods are configurable per customer for enterprise agreements, supporting customers with specific regulatory requirements (HIPAA, financial services). Retention is enforced through automated lifecycle policies in [S3/equivalent], and deletion is logged. Customers can request early deletion of their AI interaction data through our standard data deletion process, which is completed within 30 business days and confirmed in writing.

Q: How do you monitor AI model performance, drift, and anomalous behavior?

Early-stage answer

We monitor AI model performance through [Datadog/CloudWatch/custom dashboards] tracking: response latency, error rates, output length distribution, retrieval relevance scores, and user feedback signals (thumbs up/down where implemented). We've defined baseline metrics for normal model behavior, and alerts fire when metrics deviate significantly from baselines.

For drift detection, we compare weekly performance metrics against our baselines and investigate meaningful changes. We don't yet have automated drift detection for model output quality, but our monitoring covers the operational and behavioral signals that indicate when something has changed. We review AI performance metrics weekly in our engineering standup.

Growth-stage answer

AI monitoring covers three dimensions: operational, behavioral, and security. Operational: latency, throughput, error rates, and cost per interaction are tracked in [Datadog] with SLO-based alerting. Behavioral: output quality metrics (retrieval relevance scores, response coherence, hallucination rate via automated evaluation, user feedback signals) are tracked against per-model baselines, with drift alerts when metrics exceed defined thresholds.

Security: prompt injection attempt rates, filter bypass rates, anomalous access patterns (unusual query volumes, off-hours usage, bulk data retrieval through AI features), and cross-tenant retrieval anomalies are monitored with security-specific alerting. Dashboard views are available for engineering, security, and product teams.

Monthly AI performance reviews evaluate trends and determine whether model updates, prompt adjustments, or control changes are needed. Anomaly investigations follow our incident response process.

6. Agent Permissions & Autonomy

What the buyer is evaluating: Whether your AI agents are over-permissioned, and whether you've thought about what happens when they do something unexpected. 90% of AI agents are over-permissioned. The Glean agent that downloaded 16 million files while all other users combined accessed 1 million is the example that keeps CISOs up at night. Buyers want to know: what can your agents access, how are permissions scoped, and what happens when things go wrong?

Q: What can your AI agents access, and how are their permissions scoped?

Early-stage answer

Our AI agents operate under a defined permission model. Each agent has a documented scope that specifies exactly which APIs, data stores, and actions it can access. Permissions are derived from the authenticated user's access level. The agent cannot access data or perform actions that the user themselves couldn't perform through the standard product interface.

Agent permissions are implemented through our existing authorization layer using scoped API tokens, not through separate agent-specific credentials with broader access. We maintain a permissions matrix for each agent documenting its access scope, and this matrix is reviewed when agent capabilities change.

Our architecture follows the principle described in IBAC (Intent Based Access Control, ibac.dev): permissions are derived from user intent and enforced deterministically at every tool invocation.

Growth-stage answer

Agent permissions follow an Intent Based Access Control (IBAC) architecture, as described at ibac.dev. Permissions are derived from the user's declared intent for each interaction, scoped to the minimum data and actions needed for that specific task, and enforced deterministically by an authorization engine at every tool invocation.

Agents do not hold standing permissions. Each tool call made by an agent is authorized individually against the user's access scope and the declared intent. Our authorization engine evaluates: does the user have access to this resource? Is this action within the scope of the current intent? Does this action exceed defined rate or volume limits?

Agent permission boundaries are defined in code, reviewed during security design reviews, and tested through automated integration tests that verify agents cannot exceed their scoped access. The full permissions matrix is available for enterprise security evaluations.

Q: What kill switches or circuit breakers exist for AI agent operations?

Early-stage answer

We implement rate limiting and scope boundaries for AI agent operations. Per-session limits cap the number of tool calls, API requests, and data volume an agent can process in a single interaction. If an agent hits these limits, the operation is paused and the user is notified.

Administrators can disable AI agent features per-tenant through our admin dashboard, effective immediately. We can disable agent capabilities globally through a feature flag in [LaunchDarkly/equivalent] within minutes.

For our production environment, we monitor agent activity metrics and have alerts for anomalous behavior: unusual tool call volumes, access to resources outside the user's normal patterns, or error rate spikes in agent operations.

Growth-stage answer

Circuit breakers operate at three levels. Per-interaction: hard limits on tool calls (some number per interaction), data volume retrieved (some MB), and execution time (some seconds). Exceeding any limit terminates the agent operation and logs the event. Per-tenant: administrators can disable agent features instantly through the admin console. Rate limits prevent any single tenant's agents from consuming disproportionate resources.

Global: engineering can disable agent capabilities across the platform within seconds through feature flags in [LaunchDarkly], with a documented runbook for when to use this control. Monitoring triggers automatic circuit breaker activation when agent behavior metrics exceed defined thresholds: if a single agent session attempts more than N tool calls or accesses more than N resources, the session is terminated automatically and a security alert is generated.

All circuit breaker activations are logged and reviewed. The circuit breaker thresholds are tuned quarterly based on normal usage patterns.

Q: How do you handle AI agent actions that could be irreversible?

Early-stage answer

Actions with side effects (creating, modifying, or deleting data; sending communications; calling external APIs) require explicit user confirmation before the agent executes them. The agent presents the proposed action with a clear description of what will happen, and the user must approve before execution. This confirmation step is enforced at the application layer, not through prompt instructions to the model.

For operations classified as destructive (deletions, bulk modifications), we implement a preview mode where the agent shows what would be affected before any action is taken. Irreversible actions are logged with the user's confirmation timestamp for audit purposes.

Growth-stage answer

Agent actions are classified into three tiers based on reversibility and impact. Read-only actions (queries, searches, data retrieval) execute without additional confirmation. Reversible write actions (creating draft content, making changes with undo capability) execute with notification to the user and automatic logging.

Irreversible or high-impact actions (deletions, external communications, financial transactions, bulk data modifications) require explicit user confirmation through a structured approval flow that shows the exact action, affected resources, and impact assessment. Action classification is defined in our authorization engine configuration, not in agent prompts.

All agent-initiated actions are logged with action type, approval status, executing user, and result. For enterprise customers, action tier thresholds are configurable, allowing security teams to require confirmation for action categories that match their internal policies.

Q: How do you manage OAuth tokens and API keys used by AI agents?

Early-stage answer

AI agents access external services through OAuth tokens and API keys managed by our application, not held by the agent directly. Tokens are stored in [AWS Secrets Manager/encrypted database] and accessed by the agent runtime through our service layer. Agent requests to external services go through our API gateway, which handles credential injection, so tokens are never present in the agent's context or visible to the model.

OAuth tokens are scoped to the minimum permissions needed for the agent's documented capabilities. Token expiration and refresh are handled by our credential management layer. We audit which agents use which credentials and review the access scope quarterly.

Growth-stage answer

Agent credential management follows a zero-trust architecture. No AI agent directly holds or sees credentials. Tokens and API keys are managed in [AWS Secrets Manager/HashiCorp Vault] with access controlled through our service layer. When an agent needs to call an external service, the request flows through our API gateway, which injects the credential at the network layer after authorization checks confirm the agent's action is within scope.

OAuth tokens are requested with minimum scopes, use short-lived access tokens (1-hour maximum), and refresh tokens are stored with encryption at rest. Credential usage is logged per-agent, per-session: which credential was used, what action was performed, and what data was accessed.

Anomalous credential usage (unusual call volumes, access outside normal hours, requests to endpoints not in the agent's documented scope) triggers security alerts. Credentials are rotated on a defined schedule and immediately upon any suspected compromise.

7. AI-Specific Compliance

What the buyer is evaluating: Whether you've mapped your AI features to emerging compliance frameworks, or whether you're assuming SOC 2 covers it. Schellman says explicitly that "SOC 2 is not intended to be a comprehensive AI risk management framework." ISO 42001 is new enough that having it, or credibly working toward it, puts you ahead of 95% of competitors. Buyers want to know which frameworks apply to your AI features and what your timeline looks like.

Q: Are you pursuing ISO 42001 certification or alignment?

Early-stage answer

We're currently aligning our AI governance practices to ISO 42001 requirements, though we have not started a formal certification process. We've completed a gap assessment against ISO 42001 control areas and identified N gaps, primarily in formal documentation and process maturity. Our AI governance policy, risk assessment process, and acceptable use policy were developed with ISO 42001 alignment in mind.

Our roadmap targets formal ISO 42001 readiness assessment by Q3 next year, with certification to follow based on customer demand and resource availability. We've prioritized alignment over certification because the underlying controls matter more than the certificate at our current stage.

Growth-stage answer

We are [actively pursuing ISO 42001 certification / aligned to ISO 42001 with certification planned for quarter/year. Our AI management system covers the full scope of ISO 42001: AI policy and governance, risk management, AI system lifecycle management, data management, and monitoring. We completed a formal readiness assessment with our audit firm in Q1, which identified 3 gaps now being addressed.

Our AI management system documentation is maintained in our GRC platform with the required records for leadership commitment, risk treatment, and operational controls. Key controls include our AI Impact Assessment process, model inventory, bias evaluation framework, and AI incident response procedures.

Our existing SOC 2 Type II and ISO 27001 programs provide the information security foundation, and ISO 42001 extends these specifically to AI system management.

Q: How do you map AI-specific controls to existing compliance frameworks (SOC 2, ISO 27001)?

Early-stage answer

We've extended our SOC 2 controls to cover AI-specific risks. This includes adding AI model management to our change management process, incorporating AI data flows into our data handling controls, adding prompt injection testing to our vulnerability management scope, and including AI-specific risks in our risk register.

We recognize that SOC 2 wasn't designed as an AI risk framework, so we supplement it with OWASP LLM Top 10 as our AI-specific threat model and NIST AI RMF categories for risk assessment. Our auditor is aware of our AI features and has included AI-relevant controls in our last examination. We maintain a mapping document showing how AI-specific risks map to our existing SOC 2 trust service criteria.

Growth-stage answer

We maintain an explicit control mapping between our AI-specific controls and SOC 2, ISO 27001, and ISO 42001. The mapping is documented in our GRC platform and covers: AI governance mapped to SOC 2 CC1 (Control Environment) and ISO 27001 A.5 (Policies), AI risk management mapped to SOC 2 CC3/CC4 and ISO 27001 A.8, AI data controls mapped to SOC 2 CC6 (Logical Access) and ISO 27001 A.8, AI monitoring mapped to SOC 2 CC7 (System Operations) and ISO 27001 A.12, and AI incident response mapped to SOC 2 CC7.4 and ISO 27001 A.16.

Controls that are AI-specific and not adequately covered by existing frameworks (adversarial defense, model supply chain, agent permissions) are managed under our ISO 42001 alignment program with NIST AI RMF as the reference. This mapping is reviewed during each audit cycle and updated when frameworks are revised. Our auditor evaluates AI-specific controls as part of our annual SOC 2 Type II examination.

Q: What preparations are in place for the EU AI Act and other AI-specific regulations?

Early-stage answer

We've completed an initial assessment of our AI features against EU AI Act risk categories and determined that our current AI features classify as [limited/minimal risk]. We've documented this classification with the supporting rationale. For limited-risk obligations (transparency requirements), we've implemented user-facing disclosures that AI-generated content is AI-generated.

We're monitoring regulatory developments through our legal team and [industry groups/counsel] and maintaining a regulatory tracking document that maps emerging AI regulations to potential product impacts. Our product roadmap includes provisions for additional transparency and governance controls as regulatory requirements become final.

Growth-stage answer

EU AI Act preparedness is managed as a cross-functional program involving Legal, Product, Engineering, and Security. We've completed a formal risk classification of all AI features under the EU AI Act framework, documented in our AI inventory. Current classification: [list risk levels for major features]. For each classification, we've mapped the applicable requirements and assessed our current compliance.

We've implemented: transparency disclosures for all AI-generated content, user opt-out mechanisms, human oversight capabilities for higher-risk features, and documentation of training data provenance where applicable.

We're tracking additional AI regulations globally ([UK AI framework, NIST AI RMF voluntary commitments, state-level US regulations]) through our legal team with quarterly regulatory landscape reviews. Our AI governance framework was designed to be adaptable to emerging requirements, and our GRC platform tracks regulatory mapping alongside our control framework.

Q: How do you address AI-specific requirements in cyber insurance policies?

Early-stage answer

Our cyber insurance policy through [carrier] covers our AI features under the technology errors and omissions section. During our last renewal, we disclosed our AI capabilities and confirmed coverage for AI-related claims, including AI-generated output errors and AI data handling incidents.

We've reviewed our policy for AI-specific exclusions and confirmed that [our current policy does not exclude AI-related claims / we negotiated removal of an initial AI exclusion]. As AI-specific insurance products emerge, we're evaluating whether supplemental coverage is appropriate. Our broker monitors the evolving AI insurance landscape and advises us on coverage adequacy during annual renewals.

Growth-stage answer

AI risk coverage is addressed explicitly in our insurance program. Our cyber liability and tech E&O policies cover AI-related claims, and we've obtained written confirmation from our carrier that coverage extends to: AI-generated output errors, AI data handling incidents, AI system security breaches, and third-party AI model failures.

During our last renewal, we provided our insurer with a detailed AI risk profile covering our AI governance framework, model inventory, security controls, and incident history. We've worked with our broker to ensure our policy language does not contain AI-specific exclusions that would create coverage gaps.

As AI insurance products mature, we evaluate supplemental AI-specific coverage annually. Our AI incident response process includes insurance notification procedures, and our General Counsel coordinates with the insurance carrier on any AI-related claims or incidents.