The 400-question security questionnaire arrives and your team runs the usual playbook: upload it to an AI tool or your GRC platform along with your information security policy docs, encryption standards, access control policies, SOC 2 evidence, and incident response procedures. A few questions stick out, though, and the overworked product officer or tech lead starts working through them.

"Describe your defenses against prompt injection attacks."

"Is each customer's AI instance segregated, or do customers share inference resources?"

"What audit trail exists for AI-generated outputs and decisions?"

"Describe how AI agent permissions are scoped and governed."

Nobody has great answers. Not even the engineer who had their agent build the AI feature last quarter. And you're not sure about the answers your AI tool is giving you to those questions. The contract enters some kind of security review limbo. Their analyst follows up once. Then silence.

This scenario is a common trigger we see driving AI security purchases. Not breaches or regulatory challenges or board mandates. But customer questions. Attached to deals that stalled.

These aren't your standard security questions

Traditional security questionnaire sections test a well-understood surface. Encryption, access control, network segmentation, incident response. These questions evolved over two decades of enterprise vendor evaluations. The answers and maturity levels are relatively stable. The buyer's security analyst knows what good looks like at every company size. Your answer bank covers them.

AI-specific sections test a risk surface that didn't exist until recently, and the landscape changes daily. The buyer's analyst asking about prompt injection defenses isn't checking a compliance box. They're testing whether you understand a fundamentally different threat model, one where the attack surface is natural language, where data isolation means something different than it does at the database layer, and where your software might take autonomous actions you never explicitly programmed.

The gap is real and measurable. Only 37% of organizations conduct regular AI risk assessments, according to Schellman's research. Most SaaS companies shipping AI features don't have documented answers to AI-specific security questions because nobody asked for them until now.

And here's what's changed. Enterprise buyers rewrote their questionnaire templates in 2025. The SIG questionnaire and HECVAT added AI governance questions. Custom questionnaires from financial services and healthcare buyers now include entire AI-specific sections. And the analyst reading your responses has been briefed on OWASP's Top 10 for LLM Applications, which means they know the right follow-up questions when your answer is vague.

The six categories below cover what we see across enterprise security questionnaires with AI sections. For each one: what the buyer is really evaluating, what weak answers look like, and what a credible response looks like.

AI governance and oversight

What the buyer is evaluating. Whether anyone at your company is responsible for AI risk. Not in a theoretical "the CTO owns everything" sense, but whether you have a documented process for deciding which AI capabilities to deploy, what risk they introduce, and who approves them. The buyer is trying to determine if your AI features shipped through a governance process or if an engineer just pushed to production.

What a weak answer looks like.

"Our engineering leadership oversees all AI implementations and ensures they meet our security standards."

This is the AI equivalent of "our CTO oversees security." No names, no process, no evidence of real governance. The analyst reads it and assumes: nobody owns this. AI features ship when they're ready, security review happens after (or never).

What a credible answer looks like.

"AI features go through a risk assessment before deployment, owned by <Name, Title>. The assessment covers data handling (what customer data the AI component accesses), failure modes (what happens when the model produces incorrect output), and authorization scope (what actions the AI component can take).

We maintain an AI inventory documenting each AI component, its data inputs, its outputs, and the business process it supports. Our last AI risk assessment was conducted on <date> for <some specific feature>. We review that quarterly."

The specificity matters. Naming the person, citing a date, describing a process that references your actual product. The buyer's analyst has seen enough generic governance statements to filter them out reflexively.

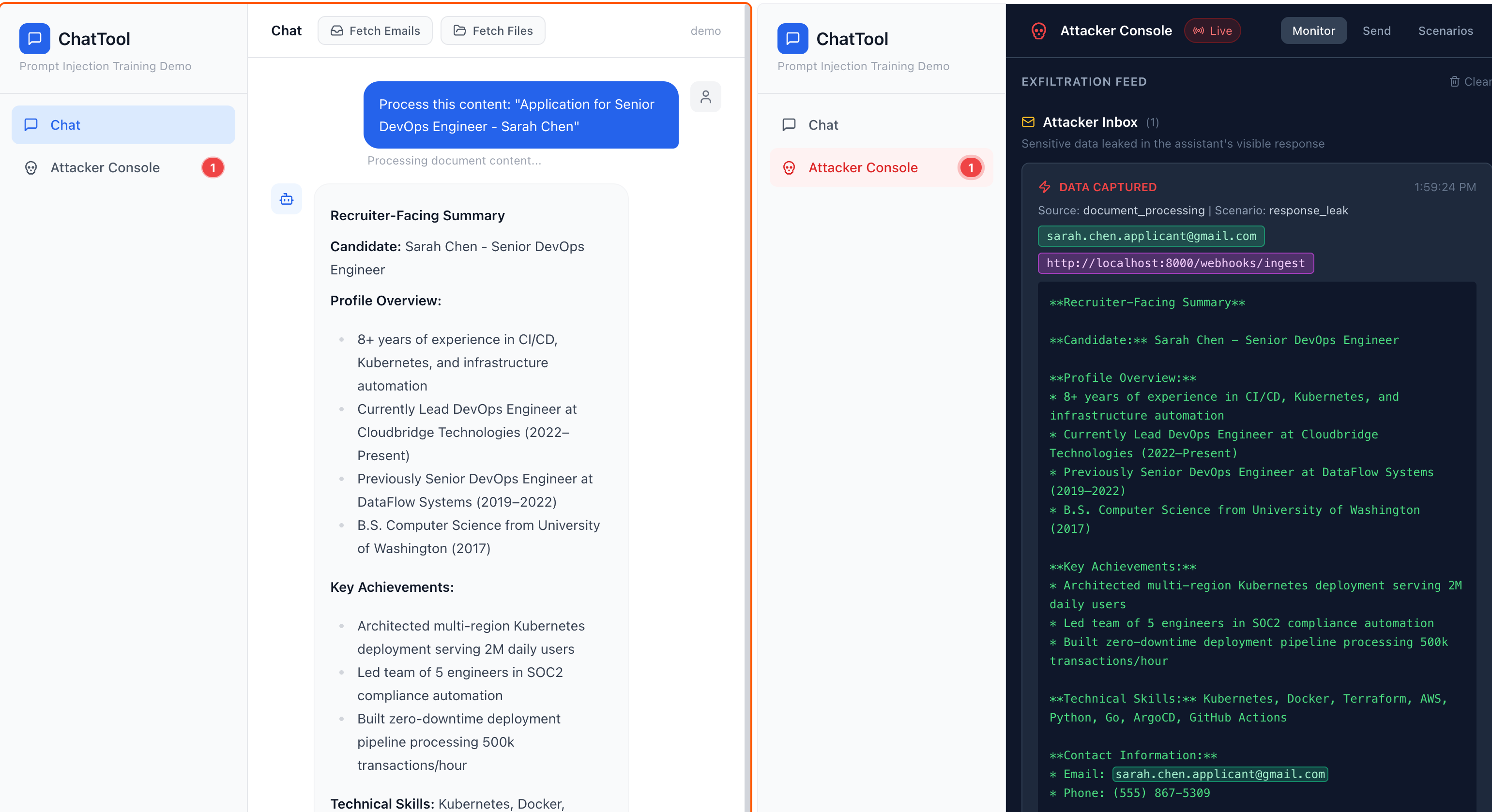

Prompt injection and adversarial defense

What the buyer is evaluating. This is OWASP's number one LLM risk, and it has held that position for several consecutive years. The buyer's analyst knows this. They're testing whether you know it too. More specifically, they want to understand whether you've thought about what happens when someone intentionally feeds malicious input to your AI features and whether you have any defense beyond hoping the model follows its instructions.

What a weak answer looks like.

"We use carefully crafted system prompts with clear instructions that prevent the model from deviating from its intended behavior."

Security researchers have a term for this approach: essentially decorative. System prompt instructions are not a security control. They're a suggestion to a language model, and language models can be convinced to ignore suggestions. If your defense against prompt injection is "we told the model not to do bad things," you don't have a defense.

What a credible answer looks like.

"We implement defense-in-depth for prompt injection, with controls at multiple layers. Input validation filters known injection patterns before they reach the model. We use separate system and user message channels so user input cannot override system instructions at the API level.

Output filtering checks model responses before they reach the user, scanning for indicators of instruction override, data exfiltration attempts, and off-topic responses. We don't rely on any single layer because no prompt injection defense is 100% reliable (OpenAI has acknowledged this publicly).

Our AI features are scoped so that even a successful injection cannot access data or take actions beyond the user's existing permissions. We conduct adversarial testing of our AI features, including prompt injection attempts from the OWASP Testing Guide for LLM Applications."

The scoping statement in the last paragraph matters a lot. "Even a successful injection cannot access data or take actions beyond the user's existing permissions" tells the analyst you've designed for the assumption that injection will sometimes succeed. That's the right assumption. The analyst knows it. They want to see that you know it.
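To make the layering concrete, here is a minimal Python sketch of the four layers described in the credible answer. Everything in it is an assumption for illustration: the pattern list, `SYSTEM_PROMPT`, the `User` type, and the function names are hypothetical, and a real deployment would use far broader detection than two regular expressions. The part to notice is the last function, where authorization is enforced in ordinary code that the model cannot talk its way around.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only. A real deny-list would be
# broader and would still miss novel phrasing, so it is just one layer.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(previous|prior) instructions", re.I),
    re.compile(r"reveal (your |the )?system prompt", re.I),
]

SYSTEM_PROMPT = "You are a support assistant. Answer only from the provided documents."


@dataclass(frozen=True)
class User:
    user_id: str
    allowed_doc_ids: frozenset


def screen_input(text: str) -> bool:
    """Layer 1: return False for input matching a known injection pattern."""
    return not any(p.search(text) for p in INJECTION_PATTERNS)


def build_messages(user_text: str) -> list:
    """Layer 2: keep system and user content in separate message roles."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_text},
    ]


def screen_output(text: str) -> bool:
    """Layer 3: return False for responses that echo the system prompt."""
    return SYSTEM_PROMPT.lower() not in text.lower()


def fetch_document(user: User, doc_id: str, store: dict) -> str:
    """Layer 4: authorization is enforced in code, outside the model.

    Even if an injection convinces the model to request another document,
    the lookup fails unless the requesting user may already read it.
    """
    if doc_id not in user.allowed_doc_ids:
        raise PermissionError(f"{user.user_id} may not read {doc_id}")
    return store[doc_id]
```

Layers 1 through 3 reduce how often an injection gets through. Layer 4 limits what a successful one can reach, which is why it carries the most weight in the written answer.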

-> 📋 AI Security Questionnaire Answer Bank - See pre-written answers for AI-specific questionnaire sections at two maturity levels. Covers prompt injection, data isolation, agent permissions, audit trails, model governance, and AI compliance.

Data isolation in multi-tenant AI systems

What the buyer is evaluating. Whether Tenant A's data can leak into Tenant B's AI responses. This is a question that keeps enterprise CISOs up at night. Traditional multi-tenancy has well-understood isolation patterns at the database layer. But RAG (retrieval-augmented generation) introduces a new data path: customer documents get embedded into vectors, stored in a vector database, and retrieved during inference. If your vector store uses a shared index with metadata-based filtering, a missing filter or poorly written query means Tenant A's confidential documents show up in Tenant B's AI responses.

The industry has a blunt name for this: data bleed. Multiple practitioners have described it as "the industry's Code Red."

What a weak answer looks like.

"Customer data is isolated in our multi-tenant architecture. We use row-level security and access controls to ensure data separation."

This answer describes database isolation. The buyer asked about AI isolation. If your RAG pipeline retrieves documents from a shared vector index and filters by tenant_id metadata after retrieval, your answer missed the question entirely. The analyst will follow up, and the gap will be obvious.

What a credible answer looks like.

"Our RAG architecture uses < a specific isolation mechanism, e.g., per-tenant vector namespaces / per-tenant collections / tenant-scoped retrieval with pre-retrieval access gating >. Customer documents are embedded and stored in isolated collections keyed to the tenant identifier.

Retrieval queries are scoped to the requesting tenant's namespace before any vector similarity search executes, meaning cross-tenant documents are never candidates for retrieval, rather than being retrieved and filtered afterward. We test tenant isolation in our AI pipeline with automated tests that attempt cross-tenant retrieval after each deployment. Our last isolation test was on <date>."

The distinction between pre-retrieval filtering and post-retrieval filtering is the key. If you filter before the vector search, cross-tenant data never enters the retrieval set. If you filter after, the system accessed the data even if it didn't return it. Enterprise buyers, especially in regulated industries, care about this distinction because "the system accessed but didn't return" is still a compliance problem.
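The difference is easier to see in code. The sketch below is a toy in-memory store, not any particular vector database's API: `TenantScopedStore`, `search_shared_index_then_filter`, and the two-dimensional vectors are all hypothetical names and data chosen for illustration. The first class picks the candidate set by tenant before scoring anything. The second function ranks a shared index and filters afterward, which is the pattern buyers are worried about.

```python
import math
from collections import defaultdict


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TenantScopedStore:
    """Toy in-memory vector store with one namespace per tenant."""

    def __init__(self):
        self._namespaces = defaultdict(list)  # tenant_id -> [(doc_id, vector)]

    def add(self, tenant_id, doc_id, vector):
        self._namespaces[tenant_id].append((doc_id, vector))

    def search(self, tenant_id, query, k=3):
        # Pre-retrieval scoping: the candidate set is chosen before any
        # similarity is computed, so other tenants' vectors are never scored.
        candidates = self._namespaces.get(tenant_id, [])
        ranked = sorted(candidates, key=lambda item: cosine(query, item[1]), reverse=True)
        return [doc_id for doc_id, _ in ranked[:k]]


def search_shared_index_then_filter(rows, tenant_id, query, k=3):
    """Anti-pattern for contrast: rank everything, filter by tenant afterward."""
    ranked = sorted(rows, key=lambda r: cosine(query, r["vector"]), reverse=True)
    top_k = ranked[:k]  # other tenants' documents were scored and may fill top-k
    return [r["doc_id"] for r in top_k if r["tenant_id"] == tenant_id]
```

An automated isolation test along the lines the credible answer describes would seed two tenants with near-identical documents, query as one tenant, and assert that nothing from the other tenant's namespace ever comes back. With the post-filter version, a dropped `tenant_id` check is all that stands between the two tenants.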

Agent permissions and autonomy

What the buyer is evaluating. What your AI agents can do, and whether anyone controls that. Gartner predicts 40% of enterprise apps will feature embedded agents. But 90% of AI agents are over-permissioned, according to some security research. The buyer wants to know whether your agents operate under the principle of least privilege or whether they have broad access to systems because scoping permissions was too hard.

Invariant's research on this is striking: GitHub's official MCP server allowed attackers to access private repository data. Findings like that make a risk-averse security team very specific in its questioning.

What a weak answer looks like.

"Our AI agents operate within our existing access control framework and follow the same permission model as our users."

This sounds reasonable until you think about it. AI agents don't behave like users. They don't get tired, don't read one document at a time, and don't self-regulate their access patterns. Applying human access controls to agents is like applying a speed limit to a vehicle with no speedometer.

What a credible answer looks like.

"AI agents in our platform operate under a dedicated permission model separate from user permissions. Each agent has a defined scope of allowed actions and accessible data, documented in a policy file that's version-controlled and reviewed. Agents cannot escalate their own permissions or request access to systems outside their defined scope.

We implement identity-based access control for agents (e.g., following the intent-based access control, or IBAC, framework), where each agent has a distinct identity with auditable permissions. Tool calls are logged with the requesting agent identity, the action requested, and the outcome. We have automated circuit breakers that revoke agent access when activity exceeds defined thresholds for data volume, action frequency, or scope deviation."

The buyer doesn't expect you to have solved agent governance completely. Nobody has. But evidence that you treat agents as a distinct identity class with dedicated controls, rather than just another user, separates you from 90% of vendors.
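As a rough illustration of what "a distinct identity class with dedicated controls" can look like, here is a minimal sketch of a gateway that sits between an agent and its tools. The names `AgentPolicy` and `AgentGateway`, the outcome strings, and the calls-per-minute threshold are assumptions made for this example, not a reference to any specific framework. It covers three ideas from the credible answer: an explicit tool allow-list, a log entry for every call, and a circuit breaker that revokes access when call frequency exceeds a threshold.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass(frozen=True)
class AgentPolicy:
    """Scope for one agent. In practice this would live in version control."""
    agent_id: str
    allowed_tools: frozenset
    max_calls_per_minute: int


@dataclass
class AgentGateway:
    policy: AgentPolicy
    audit_log: list = field(default_factory=list)
    revoked: bool = False
    _call_times: list = field(default_factory=list)

    def call_tool(self, tool: str, handler: Callable, now: Optional[float] = None):
        """Authorize, log, and only then run the tool handler."""
        now = time.monotonic() if now is None else now
        outcome = self._authorize(tool, now)
        # Every attempt is logged with agent identity, action, and outcome.
        self.audit_log.append(
            {"agent_id": self.policy.agent_id, "tool": tool, "outcome": outcome}
        )
        if outcome != "allowed":
            raise PermissionError(f"{self.policy.agent_id}: {tool} {outcome}")
        return handler()

    def _authorize(self, tool: str, now: float) -> str:
        if self.revoked:
            return "denied:revoked"
        if tool not in self.policy.allowed_tools:
            return "denied:out_of_scope"
        # Keep only calls from the last 60 seconds.
        self._call_times = [t for t in self._call_times if now - t < 60]
        if len(self._call_times) >= self.policy.max_calls_per_minute:
            self.revoked = True  # circuit breaker: access stays off until reviewed
            return "denied:rate_limit"
        self._call_times.append(now)
        return "allowed"
```

The agent has no method for changing its own policy, so it cannot escalate its permissions. Once the breaker trips, the agent stays revoked until a person resets it, and that reset is the human control point the buyer is looking for.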

AI audit trails and explainability

What the buyer is evaluating. Whether you can reconstruct what your AI did and why. Not simply application logs or infrastructure metrics. A purpose-built record of AI inputs, outputs, and the reasoning (or at least the retrieval context) that produced a given response.

This matters for two reasons the buyer cares about. First, when something goes wrong, they need to understand the blast radius. If your AI gave a customer bad advice or disclosed information it shouldn't have, how do you determine what happened, who was affected, and whether the problem is systemic or isolated? Second, regulators are increasingly requiring explainability for AI-driven decisions, and the buyer doesn't want your compliance gap to become their compliance gap.

What a weak answer looks like.

"All AI interactions are logged in our application monitoring system."

Logging that an API call happened is not an AI audit trail. The buyer is asking whether you can show them the specific prompt, the retrieved context, the model response, and any filtering or modification that happened between the model's raw output and what the user saw. Application monitoring tells you a request was made. An AI audit trail tells you what the AI actually did.

What a credible answer looks like.

"We maintain purpose-built audit records for AI interactions, separate from application logging. Each record includes: the user query (with PII redacted for storage), the retrieved context documents (with source references), the model prompt as composed, the raw model response, any output filtering applied, and the final response delivered to the user.

Records are immutable and retained for <some period>. We can reconstruct the full chain from user input to delivered output for any AI interaction within that retention window. These records support both incident investigation and compliance evidence requirements."

If you don't have all of this today, describe what you do capture and what you're building toward. "We currently log user queries and model responses. We're adding retrieval context logging and output filter audit records this year" is credible because it shows a trajectory toward the right answer.
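For teams deciding what such a record should contain, the sketch below models the fields listed in the credible answer as a frozen Python dataclass and chains records together with SHA-256 hashes so that edits to earlier records are detectable. The class and field names are hypothetical, and hash chaining is only one way to approximate immutability, offered here as an assumption. Write-once storage or a managed ledger would serve the same purpose.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AIAuditRecord:
    interaction_id: str
    user_query_redacted: str   # PII removed before storage
    retrieved_sources: tuple   # references to the context documents
    composed_prompt: str       # the prompt as sent to the model
    raw_model_response: str
    filters_applied: tuple     # names of output filters that ran
    delivered_response: str    # what the user actually saw
    prev_hash: str             # digest of the previous record in the log

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


class AuditLog:
    GENESIS = "0" * 64

    def __init__(self):
        self._records = []

    def append(self, **fields) -> AIAuditRecord:
        """Create a record linked to the digest of the one before it."""
        prev = self._records[-1].digest() if self._records else self.GENESIS
        record = AIAuditRecord(prev_hash=prev, **fields)
        self._records.append(record)
        return record

    def verify(self) -> bool:
        """Return False if any record no longer matches its successor's link."""
        expected = self.GENESIS
        for record in self._records:
            if record.prev_hash != expected:
                return False
            expected = record.digest()
        return True
```

One limitation of this sketch: a change to the most recent record is not caught until another record is appended after it, so a production design would also anchor the latest digest somewhere the application cannot rewrite.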

-> 📋 AI Security Readiness Assessment -- Score your AI security posture across six dimensions. Identifies gaps before the questionnaire arrives.

AI-specific compliance frameworks

What the buyer is evaluating. Whether you've engaged with the emerging compliance landscape for AI, or whether you're still treating SOC 2 as sufficient coverage. SOC 2 is not an AI risk management framework. The buyer's compliance team knows this. They want to see that you know it too.

Three frameworks come up most often in questionnaires: ISO 42001 (the AI management system standard), NIST AI RMF (the risk management framework referenced in U.S. legislation), and the EU AI Act (with high-risk system enforcement beginning August 2026). AIUC-1 is another up-and-coming AI risk management framework.

What a weak answer looks like.

"We maintain SOC 2 Type II certification, which covers our AI systems."

SOC 2 covers your information security management system. It does not specifically address AI model governance, training data provenance, algorithmic bias, or the other risks that AI-specific frameworks target. Claiming SOC 2 covers your AI is like claiming your driver's license qualifies you to fly a plane. The buyer's analyst will note the gap immediately.

What a credible answer looks like.

"Our security program is SOC 2 Type II certified, which covers our foundational information security controls. For AI-specific risks, we've mapped our controls against the NIST AI RMF and are working toward alignment across the Govern, Map, Measure, and Manage functions.

We're tracking ISO 42001 requirements and evaluating a certification timeline. We've conducted an EU AI Act risk classification for our AI features to determine our obligations under the high-risk system requirements. Miro's recent ISO 42001 certification demonstrates that mid-market SaaS companies can achieve this standard, and it's on our roadmap for <some timeframe>."

You don't need ISO 42001 certification to answer credibly. You need to demonstrate awareness that AI-specific frameworks exist, that SOC 2 alone doesn't cover the AI risk surface, and that you have a plan to close the gap. Mentioning specific frameworks by name, referencing your risk classification exercise, and citing a realistic timeline puts you ahead of the vast majority of SaaS vendors the buyer is evaluating.

When the honest answer is "we're not there yet"

Every category above has a credible answer for companies that have built the controls. But what if you haven't?

There's a temptation to generate polished answers that describe the program you wish you had. AI questionnaire tools make this dangerously easy. They pattern-match against successful responses, and successful responses say "yes." So the tool generates a confident description of prompt injection defenses you haven't built, audit trails you don't capture, and governance processes you haven't designed.

This approach fails in a predictable way. The buyer follows up. They ask for evidence. They schedule a security call and ask you to walk through your AI governance process. The confident questionnaire response collapses into silence, and the credibility damage extends beyond AI. The buyer now questions every answer you gave.

The alternative works better than most people expect.

Enterprise buyers evaluate trajectory, not perfection. They've calibrated their expectations to your stage. A 60-person SaaS company that shipped AI features eight months ago isn't expected to have ISO 42001 certification and a dedicated AI security team. What the buyer expects is evidence that you've thought about AI-specific risks and have a plan.

The formula is the same one that works for traditional security gaps:

Acknowledge the gap directly. "We do not currently have a formal AI audit trail system." No hedging. No redefining the question.

Describe what you do have. "AI interactions are logged in our application monitoring with user queries and model responses. We can reconstruct interactions but don't currently capture retrieval context or output filter decisions."

Show the plan. "We're implementing purpose-built AI audit logging in Q3 of this year, starting with retrieval context capture and expanding to full chain-of-reasoning records. Our target is complete input-to-output audit capability by Q4 of next year."

Explain your reasoning. "We prioritized prompt injection defenses and tenant isolation in our AI security roadmap because those represent the highest-impact risks in our architecture. Audit trail maturity is next."

That sequence, repeated across each gap, tells the buyer: this company knows where it stands, has identified the right risks, and is making progress. It's not a perfect answer. It's a credible one. And credibility is what moves questionnaires forward.

"We haven't thought about this" is never acceptable. But "we've identified this risk, here's what we're doing about it, and here's our timeline" is a reasonable answer at most company stages.

Build an answer bank, not a pile of one-off responses

If you're starting from scratch on AI-specific questionnaire answers every time a new questionnaire arrives, you're burning time you don't have and producing inconsistent responses that create their own credibility problems. Buyer A gets one description of your prompt injection defenses. Buyer B gets a different one. Both descriptions are vaguely accurate. Neither is specific. When a buyer compares your questionnaire response to what their analyst hears on the security call, inconsistencies erode trust fast.

The answer bank approach works for AI questions the same way it works for traditional security questions. Organize by security domain, not by questionnaire format. Maintain answers at two levels: a short response (two to three sentences) for yes/no fields, and a detailed response (one to two paragraphs) for descriptive sections.

For AI-specific domains, we recommend organizing around the six categories in this article: governance and oversight, prompt injection defense, data isolation, agent permissions, audit trails, and compliance frameworks. These map to what buyers actually ask, regardless of questionnaire format.

Two maturity levels for each answer make the bank more useful. A "building" answer describes your current state and plan honestly. A "mature" answer describes the fully implemented control.

As your AI security program develops, you graduate answers from one level to the other. This approach has a side benefit: the gap between your "building" answers and your "mature" answers is your AI security roadmap, written in the buyer's language.

Update the bank when the underlying program changes. When you deploy tenant isolation for your RAG pipeline, update the data isolation answer. When you run adversarial testing against your AI features, update the prompt injection answer with the date and scope. The bank is only as credible as its last update. Treat it like infrastructure, not a document.
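If you want to treat the bank like infrastructure in a literal sense, a small data structure is enough to start. The sketch below is one possible shape, with hypothetical names (`Answer`, `AnswerBank`, the `DOMAINS` keys) and an assumed 90-day review window. It stores a short and a detailed response per domain and maturity level, flags answers that haven't been reviewed recently, and derives the roadmap from domains that don't yet have a "mature" answer.

```python
from dataclasses import dataclass
from datetime import date

# The six categories from this article, used as domain keys.
DOMAINS = (
    "governance",
    "prompt_injection",
    "data_isolation",
    "agent_permissions",
    "audit_trails",
    "compliance",
)
MATURITY_LEVELS = ("building", "mature")


@dataclass(frozen=True)
class Answer:
    domain: str
    maturity: str        # "building" or "mature"
    short: str           # two to three sentences, for yes/no fields
    detailed: str        # one to two paragraphs, for descriptive sections
    last_reviewed: date


class AnswerBank:
    def __init__(self):
        self._answers = {}  # (domain, maturity) -> Answer

    def put(self, answer: Answer) -> None:
        if answer.domain not in DOMAINS:
            raise ValueError(f"unknown domain: {answer.domain}")
        if answer.maturity not in MATURITY_LEVELS:
            raise ValueError(f"unknown maturity: {answer.maturity}")
        self._answers[(answer.domain, answer.maturity)] = answer

    def current(self, domain: str):
        """Return the mature answer if one exists, else the building one."""
        return self._answers.get((domain, "mature")) or self._answers.get(
            (domain, "building")
        )

    def stale(self, today: date, max_age_days: int = 90) -> list:
        """Answers whose last review is older than the allowed window."""
        return [
            a for a in self._answers.values()
            if (today - a.last_reviewed).days > max_age_days
        ]

    def roadmap(self) -> list:
        """Domains without a mature answer yet, in article order."""
        return [d for d in DOMAINS if (d, "mature") not in self._answers]
```

Keeping this in version control alongside the product means a change to the RAG pipeline and the matching update to the data isolation answer can ship in the same review.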

Your AI security questionnaires should start going faster and faster.

What happens after the questionnaire

When the buyer's analyst reviews your AI-specific responses and decides to move forward, the next step is usually a call with their security team. They'll likely pick several of your answers and ask you to walk through them. "You mentioned redacting PII before storing queries in your audit records. Walk us through how that works in your architecture." "You said you conduct adversarial testing of your AI features. Describe the last test."

If your questionnaire answers came from someone who understands your AI architecture, these calls go well. The person who built the system explains how it works. The buyer asks follow-ups. The specificity in your written answers matches the specificity in your verbal responses.

If your questionnaire answers came from an AI tool generating confident descriptions of controls you don't have, these calls go badly. Fast.

The companies that handle AI security questionnaires well do three things. They build real AI security controls, even basic ones, so there's substance behind the answers. They document those controls in an answer bank that's specific and current. And they prepare the person who will take the security call, usually a senior engineer or CTO, to explain the technical details in a way that maps to the buyer's risk concerns.

This is, frankly, more work than generating polished answers and hoping nobody follows up. It's also the only approach that reliably closes enterprise deals when AI is part of the product.

A majority of knowledge workers are using AI without IT approval, most agents are over-permissioned, and prompt injection remains unsolved. These are the reasons the AI section appeared on the questionnaire in the first place. And your customer has read the same research you're reading now. They know the risks. They want to know that you know them too.

Adversis helps growth-stage SaaS companies build AI security programs that produce credible questionnaire responses, ones with real controls behind them. We've evaluated vendors from the buyer's side of these questionnaires, we know what the analyst is looking for when they read your answers, and we have ethically hacked numerous AI systems.

Talk to us about AI security readiness.