So your AI questionnaire tool just filled in 70% of a 627-question SIG Core in an afternoon. Encryption standards, access control policies, data retention, compliance certifications. It pulled from your policy documents, matched patterns to previous answers, and the responses look accurate!

But the remaining 30% are raising yellow flags in your mind.

"Do you have a dedicated CISO? Describe their responsibilities and reporting structure."

"Describe your SIEM implementation, including log sources, retention periods, and alerting capabilities."

"When did you last conduct an incident response tabletop exercise? Describe the scenario and outcomes."

"Provide a current network architecture diagram showing trust boundaries and data flows."

"Describe your Data Loss Prevention controls."

These are questions where the buyer's security analyst stops skimming. The easy 70% confirms you have a security program. The hard 30% reveals whether it works. And the analyst reading your responses has seen enough questionnaires to spot the difference between an answer that came from your operations and one that came from a language model.

AI handles questions with a single correct answer - yes, we encrypt at rest with AES-256; yes, we enforce MFA; yes, we have SOC 2 Type II. What it can't handle are questions that demand specificity about your operations, honesty about gaps, and the kind of credibility that comes from describing things as they are rather than as they should be.

Here are the five categories of hard questions that cluster across SIG, CAIQ, and custom questionnaires. For each: what the buyer is evaluating, what bad answers look like (including AI-generated ones), what credible answers look like, and what to build so the credible answer is true.


Why AI questionnaire tools plateau at 70%

Vanta, Conveyor, Drata - these tools turned a week of copy-pasting pain from a shared Google Doc into an afternoon of pain. They match incoming questions against your approved answer library, pull evidence from connected systems, and produce first-pass responses that are accurate for policy-based questions. Do you have a password policy? What's your encryption standard? Are background checks conducted? These questions barely change between questionnaires, and AI tools handle them well. Burning your security lead's time on 400+ repeatable questions is waste. Automate the 70%.

The remaining 30% breaks AI because the answers aren't in your policy documents. They're in your operations - in your on-call rotation, your last tabletop exercise, your architecture diagram, the name of the person who owns security. When the honest answer is "we're working on it," the AI defaults to a polished affirmative instead.

Here's what that looks like in practice.

"We maintain a security monitoring program with real-time threat detection and automated incident response capabilities"

That sentence, generated for a growing SaaS company, rings false immediately to any analyst who reviews vendors for a living. They know if you had it, you'd describe it differently. They'd name the tools. They'd cite specific detection rules. They'd mention the on-call rotation. The generated version is vague because the truth underneath is vague.

The analyst (or their AI agent) has read thousands of responses. They can pattern-match AI-generated confidence the way you pattern-match a phishing email. The 70% that AI handles is table stakes. The 30% it can't is where the deal moves forward or stalls in review.

Five questions that decide

Across SIG, CAIQ, HECVAT, and custom questionnaires, the hard questions cluster into five categories. Each follows the same pattern: the buyer isn't asking whether you have the thing. They're assessing how mature your implementation is, and whether you've thought about the specific assets and systems whose compromise would cause real business harm.

1. Security leadership - "Do you have a dedicated CISO?" What's really being asked: is there a person whose job depends on your security program being real?

2. Security monitoring - "Describe your SIEM implementation." What's really being asked: if we get breached through your platform, will you know before we do?

3. Incident response maturity - "When did you last conduct an IR tabletop exercise?" What's really being asked: has anyone on your team practiced responding to a security incident, or does the plan exist only in a document?

4. Network architecture - "Provide a current network diagram." What's really being asked: do you understand your own attack surface?

5. Controls you haven't built yet - DLP, WAF, formal vulnerability disclosure programs. What's really being asked: when the answer is "no," can you be honest about it and show you have a plan?

A 40-person SaaS company isn't expected to have a full-time CISO and a $500K Splunk deployment. But the buyer has a calibrated sense of what the answer should sound like at your stage. They expect you to have thought about each of these areas and identified the specific systems and data that matter most. The questionnaire tests whether you've thought about it, not whether you've solved it.

We've spent years reviewing questionnaire responses during enterprise security evaluations and helping SaaS companies build programs that produce credible answers. The difference between responses that move forward and responses that stall almost always lives in these five categories.

"Do you have a dedicated CISO?"

SIG: Information Assurance - Security leadership and organizational structure.

This question appears on nearly every enterprise security questionnaire. SIG asks it directly. CAIQ probes it through governance questions. Custom questionnaires often expand it: "Describe your security organization, including reporting structure and responsibilities."

What the buyer is evaluating: Not the title. A buyer who has evaluated hundreds of vendors knows that a 50-person SaaS company probably won't have a full-time CISO. What they're looking for is evidence that someone specific owns the security program, that person has defined responsibilities, and there's a clear escalation path when something goes wrong. The title matters less than the structure.

Bad answer: "Our CTO oversees security." Four words, no detail, no evidence of a real program. The buyer reads this and infers: nobody owns security. The CTO has it on their list somewhere between hiring and product roadmap, and when a security incident happens, the response will be ad hoc.

AI-generated bad answer: "We maintain a dedicated security leadership function with executive oversight of our information security program, ensuring alignment with business objectives and regulatory requirements." No name, no structure, no specifics. The buyer's analyst has read this exact sentence - or something indistinguishable from it - in dozens of questionnaires. It's the security leadership equivalent of "passionate, results-driven team player" on a resume.

Credible answer: Name the person and describe the structure. What matters is specificity about who is responsible for the systems and data that your business depends on, less about abstract governance language.

If you have external security leadership: "Security program ownership sits with <Named Individual>, VP of Engineering, who is responsible for security policy, incident response coordination, and vendor risk management. We supplement internal leadership with a security advisory engagement through <Named Firm>, who provides strategic guidance on program maturity and compliance planning, and participates in enterprise buyer security calls. Our security advisor has authority to escalate security concerns independently of the engineering organization. Our roadmap includes a dedicated security hire at [specific milestone, e.g., Series B close or 75 employees]."

If you don't have external security leadership: "Security ownership sits with <Named Individual>, <their title>, who dedicates approximately X% of their time to security operations including policy management, vulnerability response, and access reviews. Security incidents escalate to <Named Individual> directly. We're evaluating an external security advisory engagement to supplement internal capabilities, with a target start date of QX YYYY."

Both answers name a person, acknowledge the current state, and show a plan. The buyer reads these and thinks: they know where they are and where they're going.

What to build: A documented security ownership model with a named person and defined responsibilities. If you go the advisory engagement route, you get a named security leader for questionnaires and calls, strategic guidance, and someone who speaks the buyer's language. Even without external help, the structural answer - a specific person with defined responsibilities and an escalation path - is what moves the questionnaire forward. Whoever owns security should be able to articulate which specific systems matter most and why. Reciting a list of responsibilities from a job description isn't useful.

"Describe your SIEM implementation"

SIG: Cybersecurity Incident Management - Security monitoring and event management.

This is the question that makes CTOs sweat. They know SIEM means Splunk or something like it. They know they don't have Splunk. They're not sure what they have qualifies as an answer.

What the buyer is evaluating: Detection capability. If someone compromises credentials and starts exfiltrating data through your API in the middle of the night, what happens? The buyer isn't asking whether you have a specific product. They're asking whether you have visibility into security-relevant events and a process for acting on them.

Bad answer: "We use CloudWatch for monitoring." This conflates infrastructure monitoring with security monitoring. CloudWatch alerts on CPU spikes, disk usage, and application errors. That's operations monitoring. The buyer asked about security event monitoring - authentication failures, privilege escalations, unusual API patterns, infrastructure changes that could indicate compromise. Answering with "CloudWatch" tells the buyer you don't distinguish between the two.

AI-generated bad answer: "We employ a Security Information and Event Management solution for real-time security event monitoring, correlation, and automated threat detection across our infrastructure." Meanwhile, the reality is CloudWatch alerts for 500 errors and a Slack channel nobody checks on weekends. The buyer's analyst will follow up: "Which SIEM platform? What log sources feed into it? What's your mean time to detect?" If you can't answer those, the initial response falls apart.

Credible answer: Describe what you monitor, what triggers alerts, and what happens when they fire. Prioritize by what an attacker would target. Acknowledge gaps.

"We aggregate logs from the following sources into <some named platform, e.g., Datadog Security Monitoring, Panther, or a centralized CloudWatch Logs setup with security-specific metric filters>:

- Authentication events: failed login attempts, MFA bypass attempts, password resets from unusual locations

- Authorization events: privilege escalation attempts, cross-tenant data access attempts, admin role changes

- Infrastructure events: security group modifications, IAM policy changes, S3 bucket policy changes, new resource creation outside approved regions

- Application events: API rate limit violations, unusual data export patterns, bulk record access anomalies

Alerts route to our on-call engineering rotation via PagerDuty. Security-critical alerts (authentication anomalies, infrastructure permission changes) page immediately. Lower-priority alerts (rate limiting triggers, failed login thresholds) create tickets for next-business-day review.

Current gap: we don't have automated correlation across log sources - our detection is rule-based rather than behavioral. We're evaluating <specific platform or approach> for implementation in QX to address lateral movement detection and anomaly-based alerting."

That answer is credible because it's specific and honest. It describes real capabilities and acknowledges a real gap.
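The two-tier routing in that sample answer can be written down as data, which makes it easy to show a buyer exactly what pages and what waits. This is a minimal sketch, not a PagerDuty integration: the category names are hypothetical labels for the alert types the sample answer mentions, and paging on unknown categories is an assumption of this sketch, not something the sample answer specifies.

```python
from enum import Enum


class Route(Enum):
    PAGE = "page on-call immediately"
    TICKET = "ticket for next-business-day review"


# Hypothetical category labels, grouped the way the sample answer groups them.
SECURITY_CRITICAL = {"authentication_anomaly", "infrastructure_permission_change"}
LOWER_PRIORITY = {"rate_limit_trigger", "failed_login_threshold"}


def route_alert(category: str) -> Route:
    """Decide how an alert of the given category is handled."""
    if category in SECURITY_CRITICAL:
        return Route.PAGE
    if category in LOWER_PRIORITY:
        return Route.TICKET
    # Assumption: an unrecognized category pages, so a new rule is never silently dropped.
    return Route.PAGE


if __name__ == "__main__":
    for name in ("authentication_anomaly", "rate_limit_trigger"):
        print(name, "->", route_alert(name).value)
```

Keeping the mapping in one place also gives you something concrete to paste into the questionnaire when the follow-up asks which alerts page and which don't.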

What to build: You don't need Splunk or a six-figure SIEM contract. Start by identifying the 3-5 specific systems whose compromise would cause real business harm - your production database, your authentication service, your CI/CD pipeline, your cloud IAM configuration.

Then build monitoring around the realistic attack paths to those systems. CloudWatch Logs with security-specific metric filters and SNS alerting is a credible starting point for AWS-native companies. A SaaS tool such as Panther adds correlation. The tool doesn't determine credibility. What matters is that you've identified which events indicate an attacker approaching the systems that matter, you're watching for them, and someone responds when they fire.

A common mistake: monitoring everything equally. Companies set up logging and then drown in alerts, which means nobody responds to any of them, or they set up so few alerts that the monitoring is irrelevant. If your most valuable asset is a multi-tenant production database, the detection rules around database access patterns, IAM changes that could grant new access, and network changes that could expose the database matter far more than generic 5xx error monitoring.

Here's what this looks like for a specific system. Say your SaaS stores customer data in a multi-tenant RDS PostgreSQL instance. Your critical monitoring path:

- IAM layer: CloudTrail alerts on any rds:ModifyDBInstance, rds:CreateDBSnapshot, or iam:AttachRolePolicy events targeting your production DB role. These fire immediately to PagerDuty.

- Network layer: VPC Flow Logs filtered for any non-application traffic to the RDS security group. If something other than your ECS tasks is talking to port 5432, you want to know.

- Application layer: Custom logging on any query that returns more than N rows from tenant-scoped tables, or any query that omits the tenant_id predicate entirely. This catches both compromised service accounts and application bugs that break tenant isolation.

- Access layer: Alerts on direct database connections via IAM authentication (as opposed to application-level connection pooling), which would indicate someone using stolen credentials to bypass the application.

Four detection rules focused on one critical asset. Set up the equivalent for your top 3-5 systems and you have a security monitoring program that a buyer's analyst will find credible. Total cost on AWS: the CloudWatch Logs you're already paying for plus a few metric filters and an SNS topic.
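To make the IAM-layer and application-layer rules above concrete, here is a rough sketch of the matching logic in plain Python. It assumes CloudTrail records arrive as dictionaries with `eventSource` and `eventName` fields and that your application can log each query with its row count. The function names and the substring check for `tenant_id` are illustrative only; a real check would inspect the parsed query, and scoping to your production DB role is left out.

```python
# (eventSource, eventName) pairs corresponding to the IAM-layer rule above.
WATCHED_EVENTS = {
    ("rds.amazonaws.com", "ModifyDBInstance"),
    ("rds.amazonaws.com", "CreateDBSnapshot"),
    ("iam.amazonaws.com", "AttachRolePolicy"),
}


def is_watched_control_plane_event(event: dict) -> bool:
    """True if a CloudTrail-style record matches one of the watched API calls."""
    return (event.get("eventSource"), event.get("eventName")) in WATCHED_EVENTS


def is_suspicious_query(sql: str, rows_returned: int, max_rows: int) -> bool:
    """Flag queries that return too many rows or never mention tenant_id.

    The substring test is deliberately naive and only illustrates the rule.
    """
    omits_tenant_filter = "tenant_id" not in sql.lower()
    return rows_returned > max_rows or omits_tenant_filter


if __name__ == "__main__":
    sample = {"eventSource": "iam.amazonaws.com", "eventName": "AttachRolePolicy"}
    print(is_watched_control_plane_event(sample))
    print(is_suspicious_query("SELECT * FROM invoices", 10, max_rows=1000))
```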

"When did you last conduct an IR tabletop exercise?"

SIG: Cybersecurity Incident Management - Incident response testing and exercises.

This question has the widest gap between what AI generates and what the buyer expects. AI tools produce well-structured answers about IR phases, roles, communication procedures, and escalation paths. None of that answers the question. The buyer asked about exercises. Not plans.

What the buyer is evaluating: Whether incident response is tested or just documented. Has anyone practiced? Have you discovered the gaps in your plan by running through a scenario? Or does the IR plan live in Confluence, untouched since it was written?

Buyers ask this because they know most early-stage companies have a plan and haven't tested it. They're not expecting a red-team-validated IR program. They're looking for evidence that someone has sat in a room and walked through a scenario.

Bad answer: "We have an incident response plan that is reviewed annually." Doesn't answer the question. The buyer asked about exercises. Restating that you have a plan signals that you either didn't read the question carefully or you're dodging it because the answer is "never."

AI-generated bad answer: "We maintain a formal incident response program with regular tabletop exercises designed to test our detection, containment, and recovery capabilities across multiple threat scenarios." The word "regular" is doing all the lifting, and it's not lifting much. When the buyer follows up with "when was the last one?" and the answer is a long pause, the credibility of every other answer you gave takes a hit.

Credible answer (you've done one): "We conducted a tabletop exercise on <some specific date> simulating <a specific scenario, e.g., compromised developer credentials leading to unauthorized access to production customer data>. Participants included <specific roles, e.g., VP Engineering, lead backend engineer, head of customer success, CEO>.

We found that our initial detection would have relied on a customer report rather than internal monitoring (we've since added alerting for the specific access pattern). Our customer communication plan had no template for data breach notification - we drafted one as a direct output of the exercise. We updated our IR plan to include <some specific change> and scheduled our next exercise for [date]."

Credible answer (you haven't done one): "We haven't conducted a formal tabletop exercise yet. Our IR plan was last reviewed and updated on [date]. We're scheduling our first tabletop for QX, focused on <specific scenario, e.g., compromised credentials leading to data exfiltration>. In the interim, our team has responded to N production incidents using our documented runbooks, which gave us practical experience with our escalation and communication procedures. Post-incident reviews are documented and have driven <some specific number> of updates to our incident response procedures."

The second answer is honest about the gap and credible about the plan. They haven't done it yet, but they know they need to, they have a specific plan, and they've tested their process informally through real incidents.

What to build: One tabletop exercise. Two hours. You can do it this week.

Step 1: Pick a scenario grounded in your architecture. Don't simulate a generic "data breach." Pick the most likely path an attacker would take to reach your most valuable assets. Three scenarios that work for most SaaS companies:

- Compromised developer credentials: An engineer's GitHub personal access token is leaked in a public commit. The attacker uses it to access your CI/CD pipeline, injects a modified build artifact, and gains access to production secrets stored in your deployment environment.

- Third-party integration compromise: A vendor whose OAuth token has broad read access to your customer database is compromised. Their token starts making bulk API calls pulling customer records.

- Misconfigured storage: An S3 bucket backing your file upload feature is accidentally made public during a deployment. A security researcher finds it and submits a report to your security@ address (or tweets about it).

Step 2: Gather the people who would respond. Engineering lead, security owner, customer-facing lead, executive. Four people is enough. Don't let this become a scheduling exercise that delays it three months.

Step 3: Walk through it step by step. For each phase of the scenario, ask:

- How do we detect this? Through which mechanism? How long does detection take?

- Who gets notified? Through what channel? Is that channel monitored overnight?

- What do we contain first? Who has the access to do it?

- Who communicates with affected customers? Do we have their contact information? Do we have a notification template?

- What's our legal obligation? (State breach notification laws vary significantly in their timelines and requirements. Do you know which states your customers are in and what the notification deadlines are?)

Step 4: Document the exercise. Date, participants, scenario, decisions made, gaps discovered, specific plan updates. This document is your questionnaire answer.
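If you keep docs next to code, the exercise record can be a small structured object rendered to text, so every tabletop captures the same fields. A minimal sketch, assuming the six fields listed in Step 4; the class name and output layout are illustrative, not a standard format.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class TabletopRecord:
    held_on: date
    scenario: str
    participants: list[str]
    decisions: list[str] = field(default_factory=list)
    gaps: list[str] = field(default_factory=list)
    plan_updates: list[str] = field(default_factory=list)

    def summary(self) -> str:
        """Render the record as plain text suitable for pasting into an answer."""
        lines = [
            f"Date: {self.held_on.isoformat()}",
            f"Scenario: {self.scenario}",
            f"Participants: {', '.join(self.participants)}",
        ]
        sections = (
            ("Decisions", self.decisions),
            ("Gaps discovered", self.gaps),
            ("Plan updates", self.plan_updates),
        )
        for title, items in sections:
            lines.append(f"{title}:")
            lines.extend(f"  - {item}" for item in items)
        return "\n".join(lines)
```

An empty "Gaps discovered" section after a two-hour exercise is itself a signal that the scenario was too easy.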

One thing worth being honest about: a single tabletop exercise won't dramatically change your incident response capability. Evidence that tabletops improve response times is thin. What they reliably do is surface assumptions your team holds that aren't true - who has access to what, whether your communication plan has the right contact information, whether anyone knows where the production database credentials are stored. Those discoveries are the real value, and they're what the buyer cares about when they read your answer.

"Provide a current network diagram"

SIG: Network Security - Network architecture and segmentation.

This question trips up more people than any other in the hard 30%. The network architecture isn't necessarily hard to describe, but few companies have a current diagram. They had one once - some whiteboard photo from when they set up their AWS environment. It predates the Kubernetes migration, the multi-region expansion, and the three microservices added last quarter.

What the buyer is evaluating: Whether you understand your own architecture. Where does data enter the system? Where does it leave? Where are the boundaries between public-facing and internal services? Where is encryption enforced? The buyer wants to see that you know how your system works, that you can represent what you have and identify where an attacker could move through it.

Bad answer: No diagram. Or a marketing architecture slide with product feature boxes that describe user workflow, rather than a data flow. Or a diagram from 18 months ago that shows a monolith when you've since decomposed into services. Or a pretty AI-generated diagram with questionable details. Each tells the buyer: nobody is maintaining architecture documentation, which means nobody has a clear picture of the attack surface.

AI-generated bad answer: "Our network architecture implements defense-in-depth principles with segmented network zones, encrypted communications between all components, and strict access controls governing inter-service communication." Descriptive prose where the buyer asked for a diagram. No visual, no specifics about how segmentation works, no trust boundary definitions.

Credible answer: A clear diagram that shows:

- VPC boundaries and account structure (if multi-account)

- Public and private subnet segmentation

- Data flow paths - how customer data enters, processes, stores, and (if applicable) exits

- External integrations with trust boundary annotations (what connects to what, and how authentication/authorization is enforced at each boundary)

- Encryption in transit indicators (TLS termination points, internal service mesh encryption if applicable)

- Where authentication and authorization are enforced - API gateway, service mesh, application layer

- Database and storage layer isolation (especially tenant isolation architecture)

For a typical early-stage SaaS company running on AWS, this might be a single VPC with public/private subnets, an ALB, an ECS or EKS cluster, an RDS instance, an S3 bucket, and a few external integrations. What matters is accuracy and currency.

What separates a diagram that answers the question from one that shows real security maturity: annotate the realistic attack paths. Where could an attacker with compromised credentials move laterally? If your application server is compromised, what else can it reach? Which network paths exist between your public-facing services and your database? These annotations show the buyer you think about architecture the way an attacker does - which is how their security team thinks about it too.

Here's what a credible diagram annotation looks like. Say you have an ECS cluster in a private subnet running your API, with an ALB in the public subnet and an RDS instance in an isolated subnet:

"Attack path concern: ECS task role has read/write access to the S3 customer-data bucket and network access to RDS on port 5432. If an application-level vulnerability allows code execution in the ECS task, the attacker inherits the task role's permissions. Mitigation: ECS task role is scoped to specific S3 prefixes per tenant. RDS access uses IAM database authentication with row-level security enforcing tenant isolation. We've restricted the task role from any IAM, STS, or EC2 actions to limit lateral movement."

That annotation, on a diagram, tells the buyer's security analyst more about your security maturity than 50 pages of policy documents.
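For readers who want to see what the mitigation in that annotation might look like, here is a hedged sketch of an IAM policy document built in Python. The bucket name, prefix layout, and statement IDs are hypothetical. Real per-tenant scoping usually needs session policies or tag-based conditions on top of a static policy like this one, so treat it as an illustration of the shape, not a drop-in policy.

```python
import json


def build_task_role_policy(bucket: str, tenant_prefix: str) -> dict:
    """Return an IAM policy document scoped to one S3 prefix, with lateral-movement actions denied."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "TenantScopedObjectAccess",
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{tenant_prefix}/*",
            },
            {
                # Explicit deny mirrors the annotation: no IAM, STS, or EC2 actions.
                "Sid": "DenyLateralMovementActions",
                "Effect": "Deny",
                "Action": ["iam:*", "sts:*", "ec2:*"],
                "Resource": "*",
            },
        ],
    }


if __name__ == "__main__":
    # "customer-data" and "tenant-123" are placeholder values.
    print(json.dumps(build_task_role_policy("customer-data", "tenant-123"), indent=2))
```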

Include a "last updated" date. The buyer checks this. A date within the last quarter signals active maintenance. No date, or a date from two years ago, signals neglect.

What to build: A living architecture diagram maintained alongside infrastructure changes. Draw.io, Lucidchart, or Mermaid in your docs repo all work. Mermaid has the advantage of version control alongside code, which makes updates more likely - you can add a CI check that flags PRs modifying Terraform or CloudFormation without a corresponding diagram update. The initial diagram takes an afternoon. Keeping it current takes discipline - update on architecture changes, review quarterly.
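The CI check mentioned above can be very small. The sketch below takes the list of files changed in a PR (however your CI exposes it) and reports whether infrastructure changed without the diagram changing. The diagram path, the `cloudformation` directory name, and the file suffixes are assumptions; adjust them to your repo layout.

```python
from pathlib import PurePosixPath

INFRA_SUFFIXES = {".tf", ".tfvars"}      # Terraform files
INFRA_DIRS = {"cloudformation"}          # hypothetical top-level template directory
DIAGRAM_PATH = "docs/architecture.mmd"   # hypothetical Mermaid diagram location


def is_infra_file(path: str) -> bool:
    """True if the path looks like a Terraform or CloudFormation file."""
    p = PurePosixPath(path)
    in_infra_dir = len(p.parts) > 1 and p.parts[0] in INFRA_DIRS
    return p.suffix in INFRA_SUFFIXES or in_infra_dir


def needs_diagram_update(changed_files: list[str]) -> bool:
    """True if the PR touches infrastructure but not the architecture diagram."""
    touches_infra = any(is_infra_file(f) for f in changed_files)
    touches_diagram = DIAGRAM_PATH in changed_files
    return touches_infra and not touches_diagram


if __name__ == "__main__":
    print(needs_diagram_update(["modules/vpc/main.tf", "README.md"]))
```

Making the check a warning, not a hard failure, is probably the kinder default, since plenty of Terraform changes don't alter the architecture.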

Pair the diagram with a one-page architecture narrative: components, data flows, trust boundaries, and security controls in plain language. The diagram answers the questionnaire question. The narrative prepares you for the follow-up call where the buyer asks you to walk them through it. On that call, being able to say "this is the path we're most concerned about, and here's what we've done to address it" tells the buyer's security team more than any polished document.

When the honest answer is "no"

Every other question in this piece has a "build this" answer. Security leadership - get a named owner. SIEM - set up log aggregation. IR exercises - run a tabletop. Network diagrams - draw one.

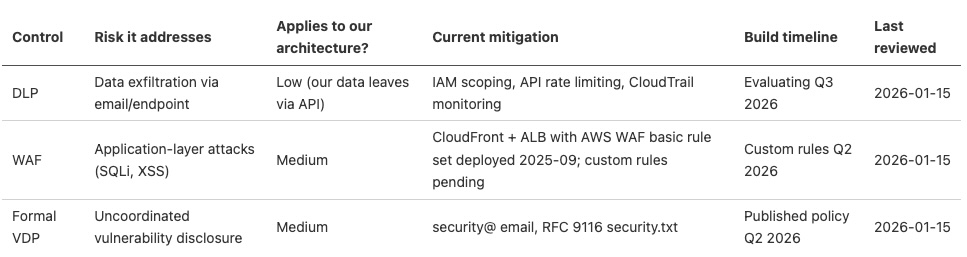

What do you do when the questionnaire asks about Data Loss Prevention and you don't have DLP? When it asks about your Web Application Firewall and you haven't deployed one? When it asks about your vulnerability disclosure program and you have a security@company.com address that goes to a shared inbox?

Before jumping to "build everything," ask a more basic question: does this control address a realistic path to the systems that matter? Not every control on a questionnaire carries equal weight. Some controls prevent common, well-documented attack techniques. Others exist because a framework committee added them.

DLP is a good example. For a SaaS company where data exfiltration risk comes primarily from API abuse or compromised service accounts, a traditional DLP product monitoring email and cloud storage is worse than security theater - expensive, operationally painful, and misaligned with where the data leaves. Restrictive IAM policies, API rate limiting, and audit logging of bulk data access may address the same risk more effectively for your environment.

When "yes" backfires. AI questionnaire tools default to affirmative answers. They're pattern-matching against successful responses, and successful responses say "yes." So when the questionnaire asks about your vulnerability disclosure program, the AI generates: "We maintain a responsible disclosure program with defined SLAs for reporter communication and a structured triage process for submitted vulnerabilities."

If your program is a security@ email that your CTO checks when he remembers, this answer won't hold up. The buyer may verify. They might submit a test to your disclosure program. They might ask on a call: "walk me through how a submitted vulnerability gets triaged." The confident "yes" becomes a credibility collapse that damages trust in every other answer you gave.

When the answer is "no" or "not yet," use this structure:

- Acknowledge the gap directly. "We do not currently have a formal DLP solution deployed." Don't hedge. Don't redefine the question. Don't describe something adjacent and hope the buyer doesn't notice.

- Describe what you do have that addresses the underlying risk. Specific measures tied to specific attack paths. "Data exfiltration risk is mitigated through restrictive IAM policies that limit data export to authorized service accounts, S3 bucket policies that prevent public access, and CloudTrail monitoring of bulk data access events. These controls address the most likely exfiltration paths for our architecture: compromised service accounts and misconfigured storage."

- Provide the implementation timeline, if you've decided the control is warranted. "We're evaluating DLP solutions with a target deployment in Q3 2026, focused initially on API-layer data export controls." Specific quarter, specific scope. If you've evaluated the control and decided it's not warranted for your architecture and threat surface, say that and explain why. A well-reasoned "we've evaluated this and it doesn't address our primary risks" is more credible than a vague promise to implement something you don't believe in.

- Explain the reasoning. "We prioritized API-layer monitoring over traditional DLP because our architecture's primary data exfiltration risk is through API endpoints, not email or endpoint file transfers." This shows the buyer you're making decisions based on your threat model rather than checking boxes.

Worked example: vulnerability disclosure programs. Most SaaS companies at this stage have a security@ address and nothing else. Here's what the honest answer looks like:

"We accept vulnerability reports at security@company.com. Reports are triaged by our VP of Engineering within 2 business days. We acknowledge receipt within 48 hours and provide status updates at 7-day intervals. We don't currently have a formal bug bounty program. Since January 2025, we've received 2 reports through this channel, 1 of which led to remediated findings (both low severity, related to HTTP security headers). We're evaluating a structured disclosure policy with published SLAs for Q2 2027."

What to build today: Create a /.well-known/security.txt file (per RFC 9116) pointing to your security@ address and linking to a one-page disclosure policy on your website. The policy should include: where to report, what to expect (timeline for acknowledgment, timeline for updates), safe harbor language (you won't pursue legal action against good-faith reporters), and scope (which assets are in scope, which aren't). This takes an afternoon. When the next questionnaire asks about your vulnerability disclosure program, you have a URL to point to instead of a sentence about a shared inbox.
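As an illustration, a security.txt file can be generated with a few lines of Python. RFC 9116 requires the `Contact` and `Expires` fields and recommends an expiry less than a year out; `Policy` is optional. The email address, URL, and 180-day default below are placeholders, and the function expects a timezone-aware `now`.

```python
from datetime import datetime, timedelta, timezone


def render_security_txt(contact_email: str, policy_url: str,
                        now: datetime, valid_days: int = 180) -> str:
    """Build the body of /.well-known/security.txt with the two required fields plus Policy."""
    expires = (now + timedelta(days=valid_days)).astimezone(timezone.utc)
    stamp = expires.strftime("%Y-%m-%dT%H:%M:%SZ")
    lines = [
        f"Contact: mailto:{contact_email}",
        f"Expires: {stamp}",
        f"Policy: {policy_url}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Placeholder address and URL; substitute your own.
    print(render_security_txt(
        "security@company.com",
        "https://company.com/security-policy",
        now=datetime.now(timezone.utc),
    ))
```

Because `Expires` is mandatory, regenerating the file on a schedule keeps it from going stale.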

About security awareness training and phishing simulations: These appear on nearly every questionnaire. Before you rush to implement a phishing simulation program, know that the evidence on their effectiveness is mixed at best. Research from ETH Zurich found that embedded phishing simulations may not reduce click rates over time and can have unexpected side effects that make employees more susceptible to phishing.

The honest and more effective answer may focus on structural controls - how your email system filters phishing, how your authentication architecture limits the damage from compromised credentials (hardware security keys, conditional access policies, limited session lifetimes). Structural controls that work even when people make mistakes are more credible than training programs that depend on perfect human judgment.

Not every gap carries the same weight. Some gaps are deal-breakers for specific buyers (healthcare companies may require DLP before contract). Some gaps are expected at your stage and a plan suffices. For expected gaps: acknowledge, describe your mitigations, plan. For potential deal-breakers: consider whether you can accelerate the build or whether this prospect requires maturity you can't credibly claim yet. Sometimes the honest assessment is "we're not ready for this buyer" - and that might be a better outcome than winning the deal with fabricated answers and getting into legal hot water.

What to build: A gap register, organized by which specific systems and data each gap exposes to risk. Document every control you know you're missing, the specific risk it's meant to address, whether that risk applies to your architecture, what you're doing instead, and the implementation timeline (if any). Review it quarterly. When a new questionnaire surfaces a gap you hadn't tracked, add it. The gap register becomes your security roadmap - prioritized by what an attacker would exploit in your specific environment.

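Here's a practical format: one entry per gap, recording the missing control, the risk it addresses, the systems and data it exposes, whether that risk applies to your architecture, what you're doing instead, and the implementation timeline.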
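A gap register with the fields described above could be sketched as a small data structure. The class name, field names, and the sample DLP entry are hypothetical; a spreadsheet with the same columns works just as well. The helper drafts an answer in the acknowledge, mitigate, plan order.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Gap:
    control: str                  # the control you know you're missing
    risk: str                     # the specific risk it is meant to address
    exposed_assets: List[str]     # systems and data the gap exposes to risk
    applies_to_us: bool           # does that risk apply to your architecture?
    mitigation: str               # what you're doing instead
    target_date: Optional[date]   # implementation timeline, None if unplanned


def draft_answer(gap: Gap) -> str:
    """Draft a questionnaire response: acknowledge, describe mitigations, plan."""
    parts = [f"We have not yet implemented {gap.control}."]
    if not gap.applies_to_us:
        parts.append(
            f"The risk it addresses ({gap.risk}) has limited "
            "applicability to our architecture."
        )
    parts.append(f"Current mitigation: {gap.mitigation}.")
    if gap.target_date is not None:
        parts.append(f"Planned implementation: {gap.target_date.isoformat()}.")
    else:
        parts.append("No implementation date is currently scheduled.")
    return " ".join(parts)


# Hypothetical example entry; every value below is invented for illustration.
dlp_gap = Gap(
    control="data loss prevention (DLP) tooling",
    risk="bulk exfiltration of customer data",
    exposed_assets=["production database", "support ticket attachments"],
    applies_to_us=True,
    mitigation="egress restricted to an allowlist and database access logged",
    target_date=date(2026, 3, 31),
)

if __name__ == "__main__":
    print(draft_answer(dlp_gap))
```

The drafted text is a starting point for a human to edit, not a finished response; the value is that the facts behind it live in one reviewed place.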

That register does double duty: it's your internal security roadmap and your answer source for the "controls you haven't built yet" category.

Building the answer bank

The gap register handles the "not yet" answers. The answer bank handles everything else - and over time, the gaps close and the bank grows.

Most questions across SIG, CAIQ, and custom questionnaires overlap. Different wording, same substance. "Describe your encryption at rest" appears in every questionnaire. So does "describe your access control model" and "how do you handle security incidents."

Organize by security domain, not by questionnaire. SIG's domain structure works as a taxonomy, but what matters is that answers map to security topics rather than specific questionnaire formats. When a new questionnaire arrives, you're matching questions to domains instead of searching through old questionnaires for something similar.

For each domain, maintain answers at two levels: the short answer (1-2 sentences) for yes/no with explanation fields, and the full answer (1-2 paragraphs) for detailed descriptions. Both should be current. Both should be reviewed quarterly or when the underlying program changes.

One principle that makes the answer bank more credible over time: for each domain, document not just what you do, but why.

"We enforce MFA on all accounts" is a fact. "We enforce hardware security keys for all employees with production access because credential compromise is the most common initial access vector for our architecture, and phishable MFA methods (SMS, TOTP) still leave us exposed to real-time phishing proxies like Evilginx" shows the buyer your security program is built on reasoning. Answers grounded in "we do this because the evidence and our threat model support it" are harder for any analyst to challenge.

Your first enterprise questionnaire takes a week. Your fifth takes a day because your answer bank covers more ground. Each questionnaire surfaces questions you haven't seen before. Those answers get added. Over time, the bank covers the vast majority of what any buyer asks, and the remaining questions are genuinely novel ones specific to that buyer's industry or risk profile.

Treat the answer bank as operational infrastructure. When you deploy a new control, update the bank. When you run a tabletop, update the IR domain. When you change your architecture, update the diagram and associated answers. The answer bank is only as credible as its last update.
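One way to keep the bank honest is to store a review date next to each answer and flag anything overdue. The sketch below assumes one entry per security domain with the short answer, full answer, and reasoning described above; the class name, field names, and the 90-day reading of "quarterly" are assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List

# Assumption: "reviewed quarterly" is approximated as every 90 days.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class Answer:
    domain: str          # security domain, not a questionnaire name
    short: str           # 1-2 sentences for yes/no-with-explanation fields
    full: str            # 1-2 paragraphs for detailed descriptions
    rationale: str       # why you do it, not just what you do
    last_reviewed: date  # updated on review or when the program changes


def stale_domains(bank: Dict[str, Answer], today: date) -> List[str]:
    """Return the domains whose answers are past the review interval."""
    return sorted(
        domain
        for domain, answer in bank.items()
        if today - answer.last_reviewed > REVIEW_INTERVAL
    )


if __name__ == "__main__":
    # Hypothetical entry; the wording is a placeholder, not a recommended answer.
    bank = {
        "access-control": Answer(
            domain="access-control",
            short="Hardware security keys are required for production access.",
            full="(full description goes here)",
            rationale="Credential compromise is our most likely initial access vector.",
            last_reviewed=date(2025, 1, 15),
        ),
    }
    print(stale_domains(bank, date.today()))
```

Running a check like this before each questionnaire tells you which domains to re-verify with their owners before reusing the stored text.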

→ Security Questionnaire Answer Bank - Organized by SIG domain category with example answers at early-stage and growth-stage maturity levels. Covers the 10 categories enterprise buyers probe most.

Questionnaires are conversations, not exams

Buyers who send SIG questionnaires to growth-stage SaaS companies aren't expecting perfection. They've calibrated their expectations to your stage. What they're evaluating is whether you know your own security posture. Does this company understand which specific systems and data matter? Are they honest about gaps? Have they prioritized based on realistic threats rather than framework checklists?

The 30% of questions AI can't answer are the ones that reveal whether your security program is built on substance or compliance theater. A polished, AI-generated response that claims maturity you don't have shows a company optimizing for the wrong thing. A specific, honest response that names the person, describes the real capability, acknowledges the gap, and explains the reasoning shows a company that has done the work of figuring out what matters.

Companies that handle questionnaires well treat them as infrastructure. The answer bank, the architecture diagram, the documented tabletop, the security ownership model - these are program artifacts built around the specific assets whose compromise would cause real harm. Build the program around what matters. The answers follow.

The questionnaire is where the conversation starts. The security call is where it continues. Get the hard answers right, and you walk into that call with credibility already established - credibility built on specificity, honesty, and operational depth that no AI tool can generate.

→ Security Call Prep Guide - The 15 most common questions buyers ask after reviewing your questionnaire responses, with frameworks for credible answers.

Next time the SIG Core lands in your inbox, the 70% still takes an afternoon. The 30% has answers behind it - answers with names, dates, diagrams, and the kind of specificity that only comes from having identified what matters and done the work to protect it.

Your deal is in security review and the questionnaire just surfaced questions your AI tool can't handle.

Adversis helps growth-stage SaaS companies build the program behind those answers - the ones that require specificity, honesty, and operational credibility.

We start by identifying the specific systems and data that drive your business, then build the security program around what matters most.

We've been on the buyer's side of these evaluations, and that pattern recognition informs how we help SaaS teams build programs that survive scrutiny.

Talk to us about questionnaire readiness.